Gemma 4 AI: The Powerful New Model Changing How We Use AI

Artificial intelligence continues to evolve at a rapid pace, and new models are making advanced capabilities more accessible than ever. Among these innovations, Gemma 4 has emerged as a powerful open-weight AI model designed to balance performance, flexibility, and efficiency.

Unlike traditional cloud-dependent systems, Gemma 4 offers the ability to run locally while still delivering strong results in text generation, reasoning, and coding. This makes it especially appealing for developers and creators who want more control over their workflows.

In this article, we'll explore what Gemma 4 is, its key capabilities, real-world use cases, and how it fits into modern AI workflows, especially when combined with visual tools for creating high-quality content.

Part 1: Gemma 4 Explained: A New Generation of AI Models

Gemma 4 is a new generation of open-weight AI models developed by Google, designed to balance performance, efficiency, and accessibility. Unlike traditional models that rely heavily on cloud infrastructure, it can run across different environments—from data centers to local devices like laptops and even mobile phones.

A key advantage of Gemma 4 is its Apache 2.0 license, which allows developers to freely use, modify, and deploy the open weights in commercial projects without heavy restrictions. This makes it a practical choice for building real-world AI applications.

Rather than being a single model, Gemma 4 is a family of models optimized for different needs:

- Lightweight models (E2B / E4B) for edge and mobile devices

- Mid-range models (26B MoE) for balanced performance

- High-performance models (31B) for more complex tasks

In addition, Gemma 4 introduces multimodal capabilities, allowing it to work with not just text, but also images—and in some versions, audio and video. This makes it more flexible for modern AI workflows that go beyond simple text generation.

To ensure safer use in real-world scenarios, Gemma 4 is evaluated through both automated systems and human review. These checks are designed to reduce harmful outputs, such as unsafe, abusive, or misleading content, making the model more reliable for production use.

Part 2: Core Capabilities of Gemma 4 You Should Know

At its core, Gemma 4 is built to handle more than just text. It’s designed as a flexible AI model that can work across different types of content and tasks, which is why both developers and creators are starting to use it in real workflows—not just experiments.

Multimodal Understanding

Unlike traditional models that only deal with text, Gemma 4 can also take in audio, images, and even short video clips (depending on the version). For instance, the E2B and E4B models can turn speech into text or translate spoken content into another language. In real use, this means you can drop in a short audio clip and quickly get a transcript or translation without extra tools. Most audio inputs are kept within about 30 seconds, and video is processed as a sequence of frames for short clips.

Image Understanding

Gemma 4 is also quite capable when it comes to images. It can pick up on objects, layouts, and even text inside visuals. This includes things like reading text from screenshots (OCR), understanding charts, or pulling information from PDFs and documents. So instead of manually reviewing a file, you can simply upload it and let the model extract or summarize what matters.

Advanced Reasoning and Agentic Workflows

What makes Gemma 4 more powerful is how it handles complex tasks. It doesn’t just respond—it can break problems down and work through them step by step. This makes it useful for multi-step workflows, automation, or anything that requires a bit of planning instead of a quick answer. You can also adjust how deeply it “thinks,” depending on the task.

Function Calling

Another practical feature is function calling. In simple terms, this allows Gemma 4 to connect with external tools or APIs and actually take action, not just generate text. For example, it could fetch data, trigger a process, or pass structured output to another system, which is essential for building AI agents or automated pipelines.
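To make the idea concrete, here is a minimal sketch of the application side of function calling: the model emits a structured request, and your code parses it and runs the matching function. The JSON shape, the `get_weather` tool, and the `dispatch_tool_call` helper are all hypothetical and used only for illustration; the exact format Gemma 4 emits may differ.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical tool. A real version would call a weather API."""
    return f"Sunny in {city}"


# Registry mapping tool names the model may request to Python callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(model_output: str) -> str:
    """Parse a JSON tool call and run the matching function.

    Assumes the model returned JSON shaped like
    {"name": ..., "arguments": {...}}. This shape is an assumption.
    """
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


# Stand-in for text the model might produce when it decides to use a tool.
example_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
print(dispatch_tool_call(example_output))
```

In an agent loop, the returned value would typically be sent back to the model as a new message so it can write the final answer.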

Coding Capabilities

If you’re working with code, Gemma 4 can help there too. It can generate code from scratch, complete unfinished snippets, or help debug issues. This makes it useful for everything from quick scripts to more complex development tasks.

Long Context Window (Up to 256K Tokens)

One standout feature is how much information it can handle at once. Smaller versions support up to 128K tokens, while larger ones go up to 256K. In practice, that means you can feed in long documents, keep extended conversations going, or build retrieval-based workflows without constantly losing context.
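As a rough illustration of what these limits mean in practice, the sketch below checks how many pieces a long document would need to be split into for a given context window. It assumes about 4 characters per token, which is only a rule of thumb; for real work, count tokens with the model's own tokenizer. The function names and the `reserve_tokens` parameter are hypothetical.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate. The 4 chars/token ratio is an assumption."""
    return math.ceil(len(text) / chars_per_token)


def split_for_context(
    text: str,
    context_tokens: int = 128_000,
    reserve_tokens: int = 4_000,
    chars_per_token: float = 4.0,
) -> list:
    """Split text into pieces that should fit in one context window.

    reserve_tokens leaves room for the instructions and the model's reply.
    """
    budget_chars = int((context_tokens - reserve_tokens) * chars_per_token)
    if budget_chars <= 0:
        raise ValueError("reserve_tokens must be smaller than context_tokens")
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]


document = "a" * 1_000_000  # stand-in for a long document
print(estimate_tokens(document))                                  # estimated tokens
print(len(split_for_context(document, context_tokens=128_000)))   # pieces at 128K
print(len(split_for_context(document, context_tokens=256_000)))   # pieces at 256K
```

Under these assumptions, the same document needs several passes with a 128K window but fits in a single request at 256K.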

Interleaved Multimodal Input

Gemma 4 also lets you mix text and images within the same prompt. This might sound simple, but it makes interactions feel much more natural. For example, you can upload an image and ask questions about it in the same request, instead of handling everything separately.
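One way to picture an interleaved prompt is as a single user message whose content is a list of parts. The sketch below builds such a message in the style that Hugging Face Transformers chat templates accept for multimodal models. The helper name and the example URL are hypothetical, and whether Gemma 4 expects exactly this layout is an assumption.

```python
def build_image_question(image_url: str, question: str) -> list:
    """Build one user turn that mixes an image and a text question."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "image", "url": image_url},  # the image part
                {"type": "text", "text": question},   # the question about it
            ],
        }
    ]


messages = build_image_question(
    "https://example.com/chart.png",  # placeholder URL
    "What trend does this chart show?",
)
print(messages[0]["content"][1]["text"])
```

Because both parts travel in the same message, the model sees the image and the question together instead of in separate requests.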

Local Deployment and Efficiency

Another advantage is that Gemma 4 is designed to run efficiently on different types of hardware, including local devices like laptops. This can help reduce costs, improve speed, and keep sensitive data on-device instead of sending everything to the cloud.
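Whether a model fits on your machine depends largely on how much memory its weights need. The sketch below is a back-of-the-envelope estimate only: parameter count times bytes per parameter. It covers weights alone; real usage is higher because of activations and the context cache. The precision table and function name are illustrative, and the sizes are the 26B and 31B figures mentioned earlier.

```python
# Approximate bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str = "bf16") -> float:
    """Estimate memory for the model weights only, in GB (1 GB = 1e9 bytes)."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"Unsupported precision: {precision}")
    # billions of parameters * bytes per parameter = gigabytes
    return params_billions * BYTES_PER_PARAM[precision]


for name, size in [("26B MoE", 26), ("31B", 31)]:
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(size, precision)
        print(f"{name} @ {precision}: ~{gb:.1f} GB")
```

By this estimate, the 31B model needs about 62 GB for weights in bf16 but roughly 15.5 GB when quantized to 4 bits, which is why quantization matters for laptops.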

Multilingual Support (140+ Languages)

The model supports more than 140 languages, making it useful for global use cases. Whether you are translating content, localizing products, or creating multilingual material, it can handle different languages without much extra setup.

Fine-Tuning and Customization

Since Gemma 4 is open-weight, it can be customized for specific needs. Developers can fine-tune it with their own data, adapt it to niche industries, or optimize it for particular tasks, which makes it more flexible than many closed models.

Part 3: How Developers and Creators Use Gemma 4

The real value of Gemma 4 shows up in how it’s used in everyday workflows. From writing content to automating tasks, it works as a flexible AI assistant across different scenarios.

Content Creation & SEO: Generate blog posts, outlines, and optimized content faster while keeping tone and structure consistent.

Coding & Development: Write, improve, and debug code, or get quick explanations for technical problems during development.

Automation & AI Agents: Power chatbots and automated workflows that handle repetitive tasks or user interactions.

Creative Brainstorming: Quickly generate ideas for articles, designs, or campaigns when you need inspiration.

Knowledge Management: Summarize documents, organize information, and make large datasets easier to navigate.

In short, Gemma 4 acts as an “AI layer” that helps speed up both creative and technical work.

Part 4: How to Use Gemma 4 (Step-by-Step Guide)

Getting started with Gemma 4 is fairly simple. You can access it through different platforms depending on your needs—whether you're testing, building apps, or running it locally.

Step 1: Choose Where to Access Gemma 4

First, decide how you want to use Gemma 4. All Gemma 4 models work with the latest version of Transformers. For quick testing, install the dependencies:

pip install -U transformers torch accelerate

Developers can also run Gemma 4 locally, depending on the model size and hardware setup.

Step 2: Load the Model

Once you have everything installed, you can proceed to load the model with the code below:

```python
from transformers import AutoProcessor, AutoModelForCausalLM

MODEL_ID = "google/gemma-4-31B-it"

# Load model
processor = AutoProcessor.from_pretrained(MODEL_ID)
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    dtype="auto",
    device_map="auto"
)
```

This setup allows you to quickly initialize the model and start building your own workflows.

Step 3: Enter Your Prompt or Input

Next, provide your input. This could be text, an image, or even audio (for supported versions). For best results, keep your prompt clear and specific. For example, ask for a summary, translation, or code generation instead of making a vague request.

If you're working with audio, you can use a structured prompt like this:

Transcribe the following speech segment in {LANGUAGE} into {LANGUAGE} text.

Follow these specific instructions for formatting the answer:

* Only output the transcription, with no newlines.

* When transcribing numbers, write the digits (e.g., 1.7 instead of "one point seven", and 3 instead of "three").

Using structured prompts like this helps improve accuracy and keeps the output consistent, especially for transcription or translation tasks.
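If you reuse this prompt for several languages, a small helper can fill in the {LANGUAGE} placeholder for you. The sketch below stores the prompt text above as a template; the constant and function names are hypothetical.

```python
# The structured transcription prompt, with {LANGUAGE} left as a placeholder.
TRANSCRIBE_TEMPLATE = (
    "Transcribe the following speech segment in {LANGUAGE} into {LANGUAGE} text.\n\n"
    "Follow these specific instructions for formatting the answer:\n"
    "* Only output the transcription, with no newlines.\n"
    "* When transcribing numbers, write the digits "
    '(e.g., 1.7 instead of "one point seven", and 3 instead of "three").'
)


def build_transcription_prompt(language: str) -> str:
    """Fill every {LANGUAGE} placeholder with the given language name."""
    return TRANSCRIBE_TEMPLATE.format(LANGUAGE=language)


print(build_transcription_prompt("English"))
```

Keeping the template in one place makes it easier to adjust the formatting rules later without editing every request.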

Step 4: Refine and Iterate

After getting a result, you can refine your prompt or add more instructions to improve the output. Gemma 4 works best when you iterate—adjusting details step by step until you get the result you need.
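The steps above can be tied together in a small helper that sends a conversation to the model and returns only the newly generated text. This is a sketch, assuming Gemma 4 follows the usual Transformers pattern of `apply_chat_template`, `generate`, and `decode`; the function name `generate_reply` and the `max_new_tokens` default are hypothetical, and `model` and `processor` are the objects loaded in Step 2.

```python
def generate_reply(model, processor, messages, max_new_tokens=256):
    """Run one chat turn and return only the model's new text."""
    # Turn the chat messages into model-ready tensors.
    inputs = processor.apply_chat_template(
        messages,
        add_generation_prompt=True,
        tokenize=True,
        return_dict=True,
        return_tensors="pt",
    ).to(model.device)
    # Remember how long the prompt is so it can be cut from the output.
    input_len = inputs["input_ids"].shape[-1]
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Keep only the tokens generated after the prompt.
    new_tokens = output_ids[0][input_len:]
    return processor.decode(new_tokens, skip_special_tokens=True)


# Usage, once the model and processor are loaded:
# messages = [{"role": "user",
#              "content": [{"type": "text", "text": "Summarize this text: ..."}]}]
# print(generate_reply(model, processor, messages))
```

To iterate, adjust the prompt text in `messages` and call the helper again until the output matches what you need.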

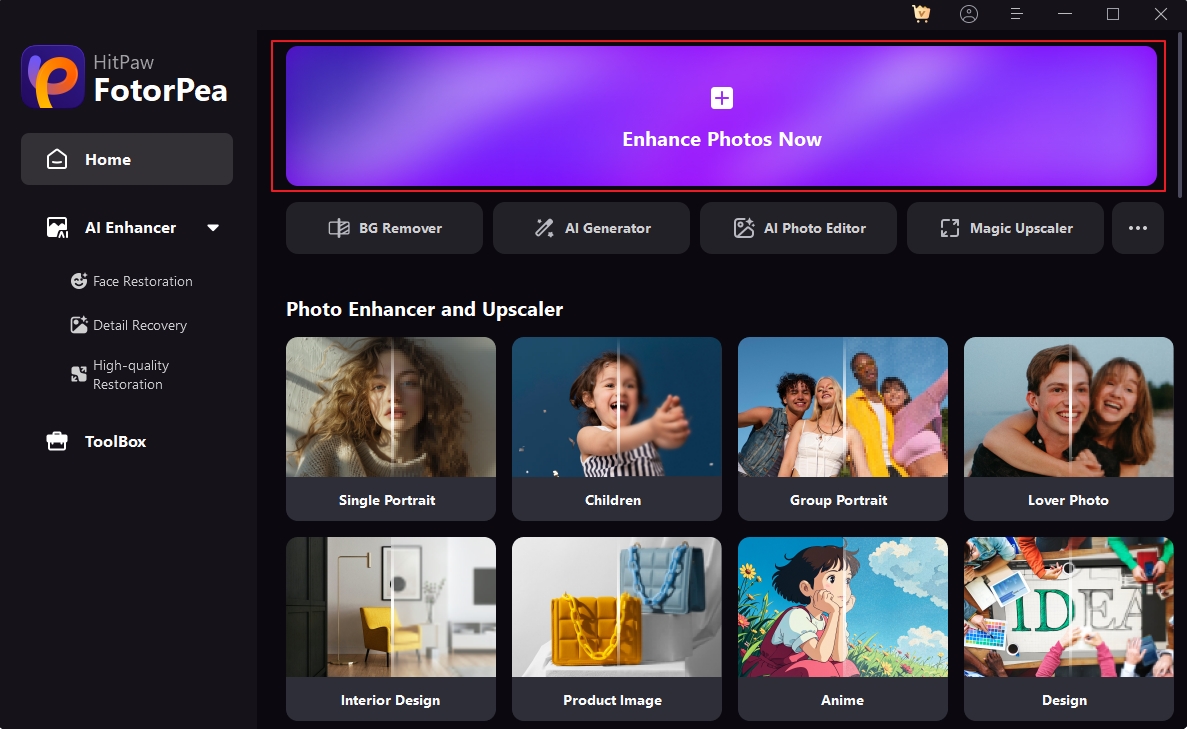

Part 5: Build Better AI Workflows for Images Beyond Gemma 4

While Gemma 4 is highly effective for generating text, ideas, and structured outputs, it does not directly create or enhance visual content. In real-world workflows, especially in content creation, visuals are just as important as text.

To build a complete AI workflow, combining language models with visual tools is essential. Tools like HitPaw FotorPea help bridge this gap by enabling users to generate and enhance images quickly and efficiently.

Key Features of HitPaw FotorPea

- Enhance any image with 20+ AI models

- Upscale images to high resolution

- Restore faces with natural detail

- Denoise and sharpen in one click

- Generate images from text prompts

- Process multiple images in batch

How to Use HitPaw FotorPea

Step 1: Upload your image to HitPaw FotorPea and click AI Enhancer.

Step 2: Choose an AI model or enhancement mode.

Step 3: Adjust settings such as resolution or style.

Step 4: Generate or enhance the image.

Step 5: Download the final result.

Why It Matters

By combining tools like Gemma 4 with visual AI solutions, you can create a seamless workflow:

Idea → Text → Image → Final Content

This approach improves efficiency, enhances creativity, and allows you to produce professional-quality results without advanced design skills.

Part 6: Gemma 3 vs. Phi 4

To better understand how these models differ in real-world usage, here’s a more concrete comparison of Gemma 3 and Phi 4 across key capabilities:

Gemma 3

- Developer: Google DeepMind

- Model Type: Open-weight, supports local + cloud deployment

- Model Size Range: ~2B to 27B parameters

- Context Length: Up to ~128K tokens (depending on variant)

- Multimodal: Text + image understanding supported

- Performance: Strong general reasoning, coding, and content generation

- Deployment: Works on local GPUs, servers, and cloud environments

- Customization: Supports fine-tuning and domain adaptation

- Use Cases: Content creation, coding, and AI workflows

- Best For: Developers needing flexibility and scalable performance

Phi 4

- Developer: Microsoft

- Model Type: Lightweight, efficiency-first design

- Model Size: ~14B parameters (optimized architecture)

- Context Length: ~32K–64K tokens

- Multimodal: Primarily text-based (limited multimodal support)

- Performance: Optimized for fast inference and low-latency tasks

- Deployment: Ideal for edge devices and resource-constrained environments

- Customization: Limited fine-tuning compared to open-weight models

- Use Cases: Lightweight applications and mobile AI tasks

- Best For: Users prioritizing speed, efficiency, and low resource usage

FAQs About Gemma 4

What is Gemma 4 used for?

Gemma 4 is used for tasks such as content generation, coding assistance, reasoning, and workflow automation. It is especially useful for developers and creators who need flexible AI solutions.

Can Gemma 4 generate images?

No. Gemma 4 can understand images as input, but its output is text, so it does not create or enhance visuals. To generate or enhance images, additional AI tools like HitPaw FotorPea are required as part of a complete workflow.

How do AI image tools fit into a content workflow?

AI-powered image tools can help generate visuals, enhance quality, and apply different styles. These tools are commonly used alongside language models to create complete content.

Conclusion

Gemma 4 represents a significant step forward in making AI more flexible, accessible, and customizable. With strong capabilities in text generation, reasoning, and coding, it serves as a powerful foundation for modern AI workflows.

However, to unlock its full potential, it's important to combine it with tools that handle visual content. By integrating image generation and enhancement solutions like HitPaw FotorPea, users can create a complete workflow that covers both text and visuals.

This combination allows you to work faster, produce higher-quality content, and fully leverage the power of AI in creative and professional projects.
