DaVinci-MagiHuman Review: Features, Performance & AI Video Creation

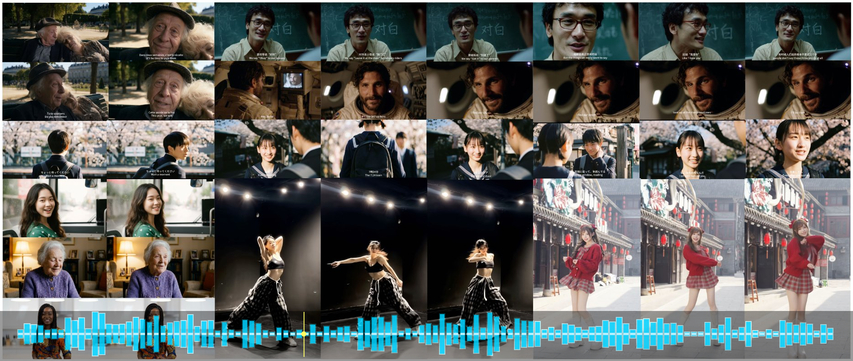

DaVinci-MagiHuman is an open-source AI video model that generates video and audio from text in a single pipeline. Most AI video tools produce audio and video as two separate outputs; DaVinci-MagiHuman synthesizes both together in one Transformer, delivering human-centric results with natural lip-sync, emotive facial performance, and lifelike motion.

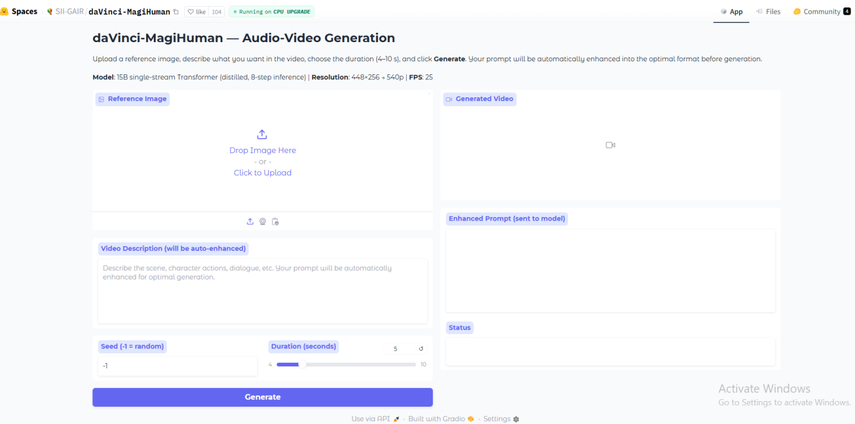

The model supports six languages (English, Chinese, Japanese, Korean, German, and French) and runs fast enough for iterative content creation. This review covers its architecture, benchmarks, and practical applications, then shows how to finish the output with HitPaw VikPea for professional social or commercial use.

Part 1: DaVinci-MagiHuman Key Features That Make It Revolutionary

Overview

DaVinci-MagiHuman's features go well beyond incremental upgrades over current AI video tools.

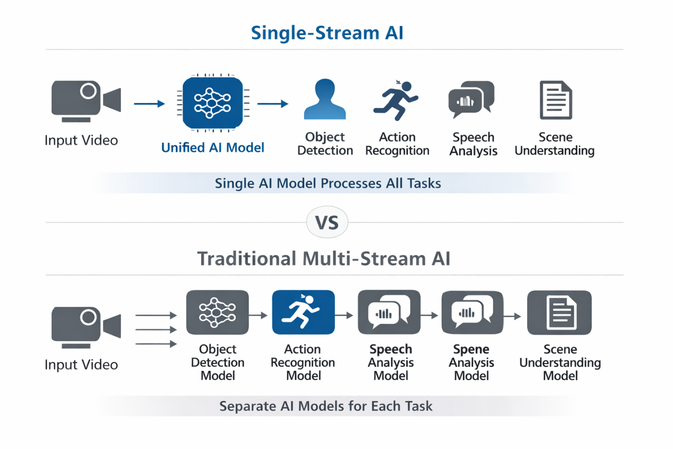

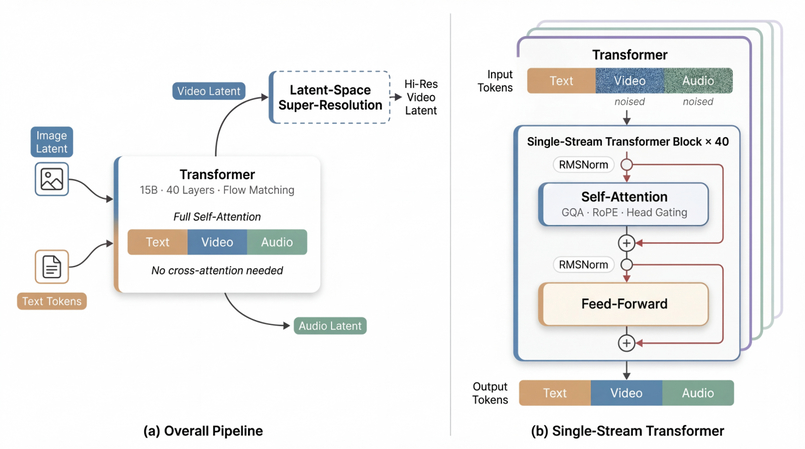

It uses a single-stream Transformer, whereas most competitors are multi-stream. Instead of processing video and audio separately and then combining them, DaVinci-MagiHuman processes text, video, and audio tokens together, so speech, lip movement, and gestures stay synchronized without blending separate outputs.

Top Features of DaVinci-MagiHuman

- Unified Transformer: Text, video, and audio are processed in a single self-attention stream (no complicated cross-attention).

- Human-Centric Output: Creates realistic avatars with natural expressions and high-quality audio-video sync.

- High Speed of Inference: Generates a 5s 1080p video in ~38s with a two-stage (low-res → refined) pipeline.

- Languages: English, Chinese, Japanese, Korean, German, and French are supported.

- Open Source: The base model, distilled model, super-resolution model, and codebase are all released.

- High Win Rate: Wins up to 80% of head-to-head comparisons against competing models in human evaluations.

This is not surprising, as the model has been tuned specifically for human-centric quality: expressive faces, natural speech delivery, and realistic body movement. Support for all six languages is built into the base model rather than added on, so pronunciation and lip-sync quality are consistent across languages.

Performance on a single H100 is strong: a 5-second video takes 2 seconds at 256p, 8 seconds at 540p, and 38 seconds at 1080p.
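To put those render times in perspective, here is a minimal sketch that converts them into clips per hour and into compute time per second of finished video. The numbers come from this review; the function names are illustrative, and the calculation assumes back-to-back rendering with no model loading or queueing overhead.

```python
# Render times for a 5-second clip on a single H100, as quoted in this review.
RENDER_SECONDS = {"256p": 2, "540p": 8, "1080p": 38}
CLIP_SECONDS = 5


def clips_per_hour(render_seconds: float) -> float:
    """Number of clips that fit in one hour of back-to-back rendering."""
    return 3600 / render_seconds


def realtime_factor(render_seconds: float, clip_seconds: float = CLIP_SECONDS) -> float:
    """Seconds of compute spent per second of finished video."""
    return render_seconds / clip_seconds


if __name__ == "__main__":
    for resolution, seconds in RENDER_SECONDS.items():
        print(
            f"{resolution}: {clips_per_hour(seconds):.0f} clips/hour, "
            f"{realtime_factor(seconds):.1f}x clip length"
        )
```

At 256p the model renders faster than the clip plays back, which is why low-resolution drafts suit quick iteration before committing to a 1080p render.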

Part 2: DaVinci-MagiHuman Architecture Explained for Creators

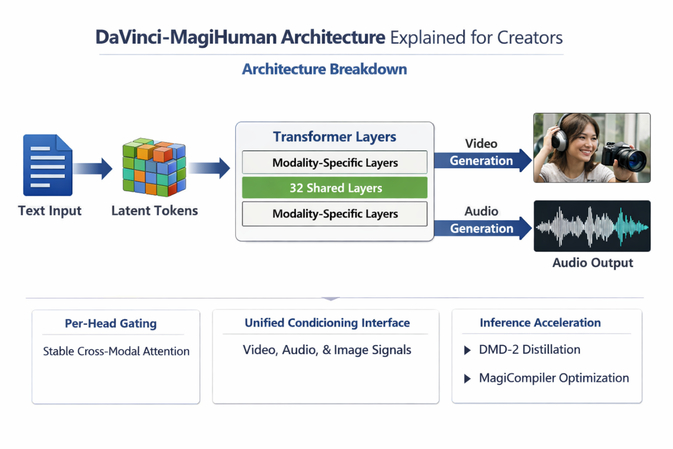

Architecture Breakdown

Knowing the architecture helps you understand how to use the model. DaVinci-MagiHuman adopts a sandwich design: the first 4 and last 4 Transformer layers are modality-specific (video, audio, and text are processed independently), while the middle 32 layers are fully shared among all modalities. This lets the model capture deep cross-modal connections while preserving the specific characteristics of each output type.
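The sandwich layout can be pictured as a simple layer plan. The sketch below is only an illustration of the 4 + 32 + 4 split described here, not the model's actual code; the names `LayerPlan`, `plan_layer`, and `build_plan` are hypothetical.

```python
from dataclasses import dataclass

# Layer counts taken from the description in this review: 4 + 32 + 4.
EDGE_LAYERS = 4
SHARED_LAYERS = 32
TOTAL_LAYERS = EDGE_LAYERS + SHARED_LAYERS + EDGE_LAYERS


@dataclass(frozen=True)
class LayerPlan:
    index: int
    kind: str  # "modality-specific" or "shared"


def plan_layer(index: int) -> LayerPlan:
    """Classify one layer by its position in the stack (0-based index)."""
    if not 0 <= index < TOTAL_LAYERS:
        raise ValueError(f"layer index must be in [0, {TOTAL_LAYERS})")
    is_edge = index < EDGE_LAYERS or index >= TOTAL_LAYERS - EDGE_LAYERS
    return LayerPlan(index, "modality-specific" if is_edge else "shared")


def build_plan() -> list[LayerPlan]:
    """Return the plan for the whole stack, first layer to last."""
    return [plan_layer(i) for i in range(TOTAL_LAYERS)]


if __name__ == "__main__":
    for layer in build_plan():
        print(f"layer {layer.index:2d}: {layer.kind}")
```

Under this reading the stack has 40 layers in total, with layers 0-3 and 36-39 handling each modality on its own and layers 4-35 operating on the combined token stream.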

Unlike conventional diffusion models, which use explicit timestep signals to direct denoising, DaVinci-MagiHuman predicts the denoising direction from the input latents themselves. Per-head gating stabilizes attention across modalities when video and audio tokens pass through the same shared layers. A common conditioning interface accepts video, audio, and reference image signals through a single minimal interface, which simplifies both inference and fine-tuning.
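This review does not say how the per-head gate is computed, so the following is a rough sketch of the general idea only. It assumes a sigmoid gate with one scalar logit per attention head, which is a common formulation but not confirmed for this model; `gate_heads` and its inputs are hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def gate_heads(head_outputs, gate_logits):
    """Scale each attention head's output vector by its own sigmoid gate.

    head_outputs: list of per-head output vectors (lists of floats).
    gate_logits:  one scalar logit per head (learned in a real model,
                  hand-picked here for illustration).
    """
    if len(head_outputs) != len(gate_logits):
        raise ValueError("need exactly one gate logit per head")
    return [
        [sigmoid(logit) * value for value in head]
        for head, logit in zip(head_outputs, gate_logits)
    ]


if __name__ == "__main__":
    heads = [[1.0, -2.0], [4.0, 0.5]]
    logits = [0.0, -20.0]  # first head half open, second head almost closed
    print(gate_heads(heads, logits))
```

The point of a gate like this is that a head producing unstable activations for one modality can be damped without affecting the other heads.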

Two other methods accelerate inference: DMD-2 distillation decreases the number of denoising steps, and MagiCompiler conducts operator-level hardware optimization. Together, these enable sub-40-second 1080p renders on H100 hardware.

Part 3: DaVinci-MagiHuman Performance & Benchmarks You Can Trust

Benchmark Results

DaVinci-MagiHuman's benchmark results are strong across the board. In human preference tests it has an 80% win rate over OVI 1.1 and a 60.9% win rate over LTX 2.3, meaning evaluators preferred its outputs in most comparisons. Its Word Error Rate (WER) of 14.6% is lower than that of both competitors, which indicates more intelligible, text-accurate speech.
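For readers unfamiliar with the metric, WER is conventionally the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference text, divided by the number of reference words. The sketch below implements that standard definition; how the benchmark transcribed the generated speech is not stated in this review, and the function name is illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
    print(f"WER: {wer:.1%}")
```

A WER of 14.6% therefore means roughly 15 word-level errors per 100 words of intended script.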

| Metric | DaVinci-MagiHuman | OVI 1.1 | LTX 2.3 |
|---|---|---|---|
| Human Win Rate | 80% / 60.9% | Baseline | Baseline |
| Word Error Rate | 14.6% | Higher | Higher |
| 256p Speed | 2 sec | Slower | Slower |
| 1080p Speed | 38 sec | Slower | Slower |
| Integrated Audio | Yes | No | No |
| Open-Source | Yes | Partial | No |

Note: Speed benchmarks measured on a single NVIDIA H100 GPU.

The overall picture is strong: DaVinci-MagiHuman is both faster and higher quality than the two models it was benchmarked against. For content creators, that means more iterations per hour and fewer fixes in post before content is ready to publish.

Part 4: DaVinci-MagiHuman Practical Applications - Real-World Use Cases

Use Cases

DaVinci-MagiHuman lends itself to a number of practical creative and business use cases:

- Generated content storytelling: Create short films or animated video logs with expressive AI human characters.

Sample prompt: "Thirty-something woman talks to camera in cozy cafe: 'I never thought this city would feel like home, but here I am.'"

- Localize content: Create the same video in different languages from one single model. Six-language campaigns that used to be six separate shoots are now six text prompts.

- Rapid prototyping: Run multiple ad scripts/creative concepts in a single afternoon. At 2 seconds per 256p render, iteration is quick.

Sample prompt: "An enthusiastic professional: 'Our platform isn't just about saving you time - it's about changing how your team works together.'"

- Digital avatars and AI presenters: Create reusable AI spokesperson personas for training videos, product demos, or internal communications - no scheduling, no re-shoots.
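As a rough illustration of the localization use case, the sketch below builds one prompt per supported language from a single scene description, following the "scene: 'spoken line'" shape of the sample prompts. It only prepares prompt strings and does not call the model; `PromptJob` and `build_localized_jobs` are hypothetical names, and the translated lines are placeholders you would supply yourself.

```python
from dataclasses import dataclass

# The six languages this review lists as supported.
LANGUAGES = ("English", "Chinese", "Japanese", "Korean", "German", "French")


@dataclass(frozen=True)
class PromptJob:
    language: str
    prompt: str


def build_localized_jobs(scene: str, lines: dict[str, str]) -> list[PromptJob]:
    """Pair one scene description with a spoken line for every language."""
    missing = [lang for lang in LANGUAGES if lang not in lines]
    if missing:
        raise ValueError(f"missing spoken line for: {', '.join(missing)}")
    return [PromptJob(lang, f"{scene}: '{lines[lang]}'") for lang in LANGUAGES]


if __name__ == "__main__":
    scene = "Thirty-something woman talks to camera in cozy cafe"
    # Placeholder text; replace each value with a real translation.
    spoken = {lang: f"[{lang} version of the line]" for lang in LANGUAGES}
    for job in build_localized_jobs(scene, spoken):
        print(f"{job.language}: {job.prompt}")
```

Keeping the scene description fixed and changing only the spoken line is one way to keep the six versions visually consistent; how prompts are actually submitted depends on the inference script in the project's codebase.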

What You Can Build With MagiHuman

- Digital Avatars and Virtual Presenters

- Content Localization at Scale

- Interactive Entertainment

- Marketing and Advertising

- Podcast and Video Content

Users can get content ready to upload without appearing on camera. Enhancement is still needed to polish the videos to professional quality.

Note: Full-resolution output requires H100-class GPU hardware. Cloud services such as RunPod, Vast.ai, or Google Colab Pro offer convenient options for artists who don't have high-end GPUs locally.

Part 5: Enhance Your DaVinci-MagiHuman Videos with HitPaw VikPea

Why Enhancement Matters

DaVinci-MagiHuman produces impressive results, but AI-generated video usually has some artifacts (minor blurring or noise around edges, banding, or color inconsistency) that should be cleaned up in post-processing before commercial or professional use. These subtle imperfections can lower the perceived quality of your videos, especially in client-facing or high-resolution formats, which makes enhancement an important final step in the video workflow.

What Can HitPaw VikPea Do for You?

HitPaw VikPea is an AI video upscaler built for exactly this purpose, with upscaling, denoising, and detail-recovery models for processing AI-generated video. It can enhance facial features, correct color tones, and remove compression artifacts, turning raw results into clean, high-definition footage. Combine the flexibility of open-source AI generation with the finishing touch of commercial enhancement and you get polished, publish-ready output suitable for social media, marketing campaigns, or client deliverables.

Key Features of HitPaw VikPea

- AI Super-Resolution Technology: Upscales DaVinci-MagiHuman video to 4K Ultra HD while retaining detail and vivid color.

- AI Frame Interpolation: Raises the frame rate for smoother, more fluid motion in cinematic and animated footage.

- AI Denoise Engine: Removes unwanted noise and restores sharpness for a cleaner, more professional result.

- Color Correction & HDR Tuning: Adjusts contrast, brightness, and color saturation to suit the look of DaVinci-MagiHuman video.

- Batch Video Upscaling Mode & Format Support: Auto Enhance Video lets you enhance multiple videos at a time, so you don't have to process clips one by one. It also supports a wide range of formats (MP4, MOV, AVI, MKV).

- Friendly to Use & Cross-Platform: An easy-to-use program compatible with Windows and macOS.

Steps to Enhance DaVinci-MagiHuman Videos with HitPaw VikPea

Step 1: Download and Install

Go to the official website and download HitPaw VikPea. After installation, launch the application and log in if prompted.

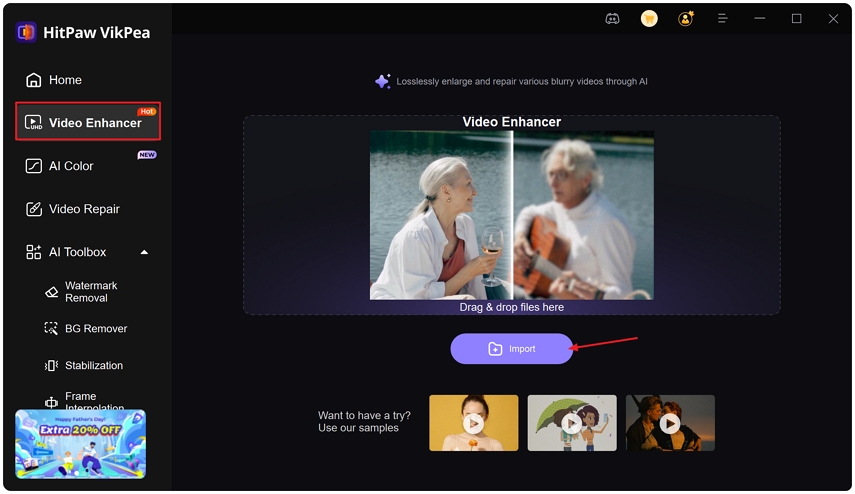

Step 2: Get Your Footage into Video Enhancer

Click the Video Enhancer module in the left panel to open it. Press the import icon to load your DaVinci-MagiHuman video file into the interface.

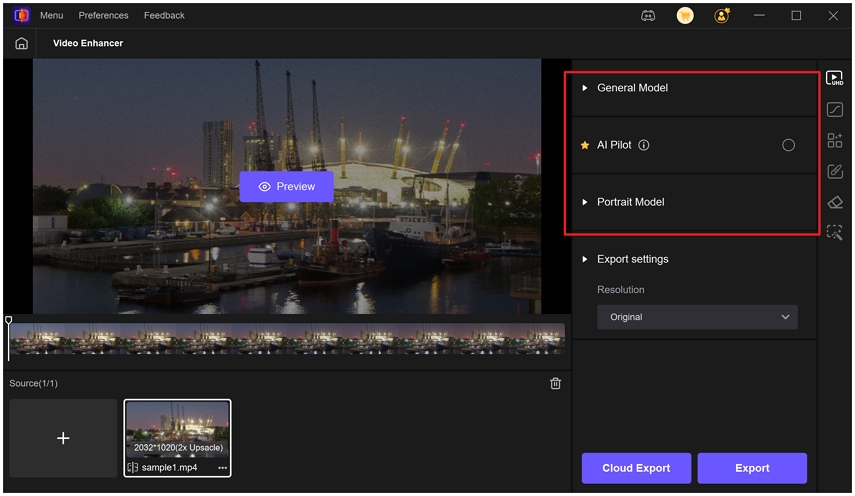

Step 3: Use the Appropriate AI Model

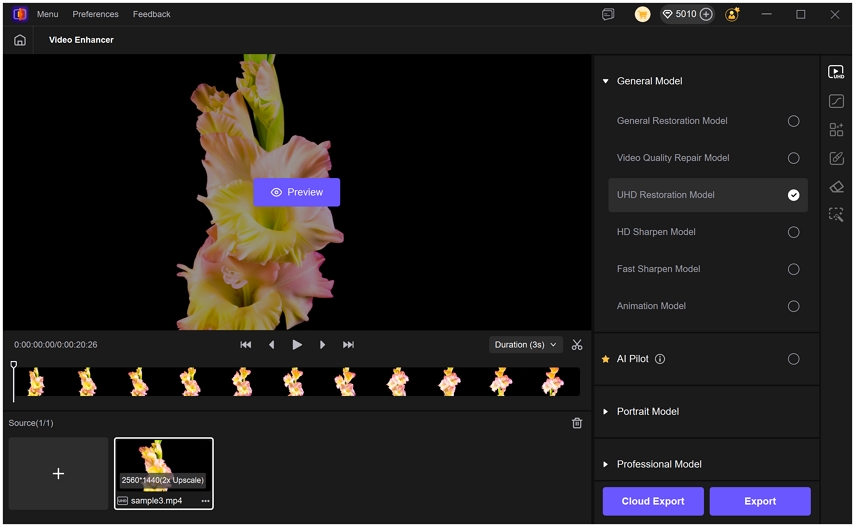

Alongside a general model that applies overall enhancement, there are several specialized models you can apply for particular enhancement needs.

You can also apply models such as the UHD Restoration Model, which further improves the quality of an already high-resolution (720p) video, enhancing clarity and restoring sharpness.

Choose your preview length (3 or 5 seconds). If you only need to enhance part of the video, use the Cut tool. Set the output resolution and format.

Tip: If you are not sure which model to use, try AI Pilot. It automatically analyzes your video and recommends the most suitable enhancement.

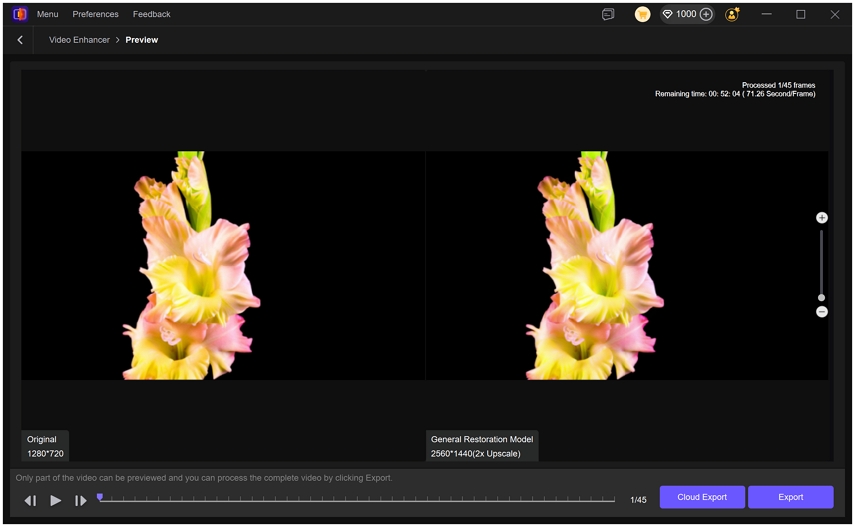

Step 4: Preview and Save

After making all necessary adjustments, click on Preview to compare the before-and-after results of your video. This lets you clearly see the difference between the original and the enhanced version before finalizing.

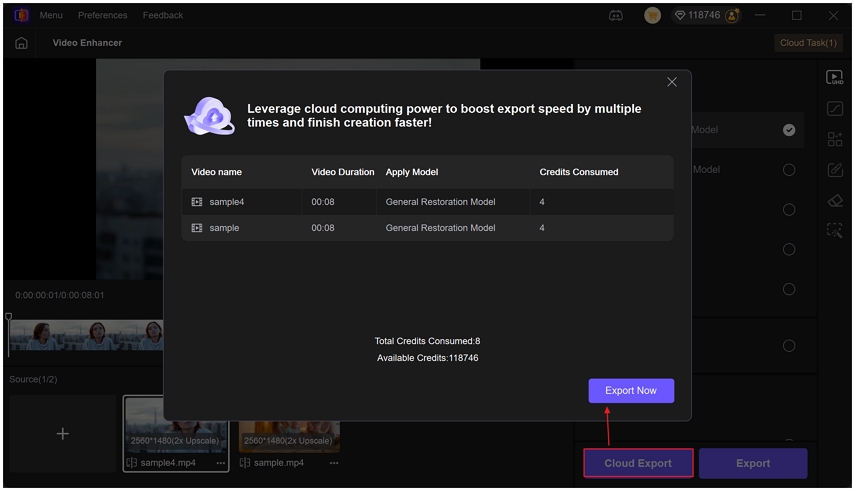

Step 5: Export or Cloud Export

Once satisfied with the preview, select Export or Cloud Export to save your video. Enjoy enhanced videos with stunning clarity.

FAQs About DaVinci-MagiHuman

Q1. Is DaVinci-MagiHuman free to use?

Yes, it is fully open-source, without the hassle of licensing. However, you need NVIDIA H100-class hardware to run 1080p. Those without local GPUs can use cloud services like RunPod or Vast.ai and pay for the compute. Low-resolution (256p) outputs can run on less powerful hardware.

Q2. Do I need coding skills to use DaVinci-MagiHuman?

Some basic terminal commands are helpful when setting up and running an inference script, but you don't have to be a full-fledged coder. The open-source community is iterating on more user-friendly wrappers. For those who don't code, cloud-hosted demos offer the fastest path forward.

Q3. Does DaVinci-MagiHuman support multiple languages?

Yes. DaVinci-MagiHuman natively supports English, Chinese, Japanese, Korean, German, and French with correct pronunciation and lip-sync in each language, which makes it one of the best open-source solutions for multi-language content production.

Conclusion

DaVinci-MagiHuman is a groundbreaking open-source AI video release of 2026. Its integrated audio-video design, multilingual support, human-centric output quality, and fast inference point the way forward for open-source AI video generation.

Using DaVinci-MagiHuman with HitPaw VikPea closes the gap between AI-generated and professionally finished video. Create expressive, well-synchronized multilingual content with DaVinci-MagiHuman, then process the output with HitPaw VikPea for upscaling, denoising, and face enhancement. The combination delivers professional results for a fraction of the cost of traditional production.
