Mastering AI Video Workflows: GPT Image 2.0 to Seedance 2.0

Generative AI has moved digital storytelling from static concept art toward finished cinematic footage. The workflow from GPT Image 2.0 to Seedance 2.0 pairs two strengths: GPT Image 2.0 interprets the prompt and produces a detailed keyframe, and Seedance 2.0 animates that keyframe with temporal stability, so the resulting video stays visually consistent. This guide explains how to connect AI image generation to professional video synthesis and get results that were previously only possible for high-budget studios.

Part 1. What is the GPT Image 2.0 to Seedance 2.0 AI Video Workflow?

The GPT Image 2.0 to Seedance 2.0 AI video workflow is a professional production pipeline that uses advanced language models to design high-resolution keyframes which are then animated by a specialized video diffusion engine. This two-step process ensures that the video retains the specific artistic style, character details, and environmental lighting established in the initial image.

How GPT Image 2.0 and Seedance 2.0 AI Work Together

- Concept Architecture: GPT Image 2.0 acts as the director by interpreting complex natural language prompts into hyper-realistic, compositionally sound base images.

- Temporal Animation: Seedance 2.0 takes the static output from GPT and applies motion physics to simulate realistic movement across time.

- Visual Continuity: The workflow uses the GPT image as a "source of truth" to prevent the morphing or flickering often seen in basic AI videos.

- Precision Control: Creators can iterate on the image design before committing to the more intensive video rendering phase.

- Semantic Alignment: The advanced prompt understanding in GPT Image 2.0 ensures that the visual elements match the intended narrative depth of the final video.

Part 2. Why Use GPT Image 2.0 with Seedance 2.0 for Professional Video Production?

Using GPT Image 2.0 with Seedance 2.0 for professional video production provides unparalleled control over character consistency and environmental detail. This specific combination reduces the unpredictability of traditional text-to-video models by establishing a high-fidelity visual anchor, resulting in flicker-free, studio-quality animations suitable for commercial use and high-end marketing.

- Enhanced Character Stability: By starting with a high-quality GPT image, the character's facial features and clothing remain consistent throughout the entire video clip.

- Cinematic Lighting Control: GPT Image 2.0 allows for precise lighting setups that Seedance 2.0 then realistically animates with shifting shadows and reflections.

- Reduced Artifacting: The Seed-Lock technology in Seedance 2.0 minimizes the "melting" effect, ensuring that objects maintain their structural integrity during movement.

- Cost and Time Efficiency: This workflow allows creators to approve a "keyframe" first, preventing the need for multiple expensive video re-renders.

- Professional Resolution: The synergy between these tools supports high-definition outputs that meet the technical requirements of modern social media and broadcast platforms.

Part 3. How to Use GPT Image 2.0 and Seedance 2.0 for AI Video Production?

To use GPT Image 2.0 and Seedance 2.0 for AI video production, you must first generate a detailed base image in GPT using cinematic prompt engineering. Once the image is refined, it is imported into a video generator that supports the Seedance 2.0 model, where motion parameters are set to breathe life into the static frame.

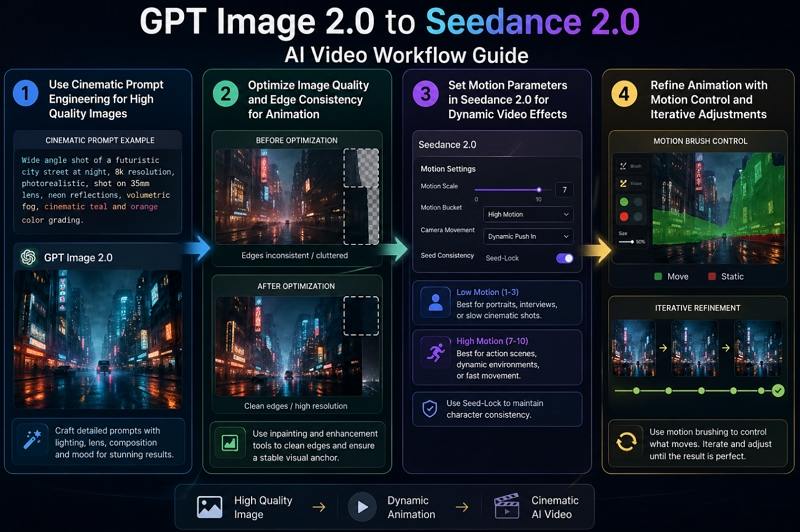

Step 1: Create Cinematic Prompts in GPT Image 2.0

Your video quality depends heavily on the initial image. When using GPT Image 2.0, focus on detailed cinematic prompts that include lighting, lens type, composition, and mood. Avoid generic descriptions and instead build rich visual context.

Example prompt structure:

Wide angle shot of a futuristic city street at night, 8k resolution, photorealistic, shot on 35mm lens, neon reflections, volumetric fog, cinematic color grading

This level of detail helps produce visually compelling frames that serve as a strong foundation for animation.
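The prompt structure above can also be assembled from named components, which makes it easier to vary one element (for example the lens) while keeping the rest fixed. The following is a minimal sketch in Python; `CinematicPrompt` and its field names are hypothetical helpers invented for illustration and are not part of GPT Image 2.0 or any other tool. The code only builds the text string that you would paste into the image generator.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CinematicPrompt:
    """Hypothetical helper: collects prompt components and joins them into one string."""
    composition: str
    subject: str
    lens: str = ""
    style: List[str] = field(default_factory=list)
    lighting: List[str] = field(default_factory=list)

    def build(self) -> str:
        # Lead with composition and subject, then append optional details in a fixed order.
        parts = [f"{self.composition} of {self.subject}"]
        parts.extend(self.style)
        if self.lens:
            parts.append(f"shot on {self.lens}")
        parts.extend(self.lighting)
        # Drop empty entries so missing fields do not leave stray commas.
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = CinematicPrompt(
    composition="Wide angle shot",
    subject="a futuristic city street at night",
    lens="35mm lens",
    style=["8k resolution", "photorealistic"],
    lighting=["neon reflections", "volumetric fog", "cinematic color grading"],
)
print(prompt.build())
```

With these values, `build()` reproduces the example prompt shown above. Changing a single field, such as `lens`, gives a controlled variation for comparison.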

Step 2: Refine the Visual Anchor for Animation

After generating your image, refine it before moving forward. Use inpainting tools to clean edges, fix inconsistencies, and enhance important areas. The goal is to create a stable visual anchor.

Seedance 2.0 relies on image boundaries to predict motion beyond the frame. Clean edges and high-resolution inputs ensure smoother camera movement and more realistic animation results.

Step 3: Set Motion Parameters in Seedance 2.0

Upload your refined image into Seedance 2.0 and configure motion settings carefully. These parameters define how your static image becomes a moving scene.

- Low motion levels are ideal for portraits, interviews, or slow cinematic shots

- High motion levels work best for action scenes, dynamic environments, or fast movement

- Use seed consistency features to maintain character identity and avoid visual distortion

Balancing motion intensity is key to achieving natural and engaging results.
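The guidance above amounts to a lookup from scene type to a starting motion level. Here is a minimal sketch in Python of how you might record those starting points for a project. Everything named here is an assumption made for illustration: the 1 to 10 scale, the preset values, and the `MotionSettings`, `motion_level`, and `lock_seed` names are hypothetical and do not describe the actual controls in Seedance 2.0.

```python
from dataclasses import dataclass

# Hypothetical presets on an assumed 1-10 motion scale; real tools may expose different controls.
MOTION_PRESETS = {
    "portrait": 2,
    "interview": 2,
    "slow_cinematic": 3,
    "dynamic_environment": 7,
    "action": 8,
}


@dataclass(frozen=True)
class MotionSettings:
    motion_level: int  # assumed scale: 1 (nearly static) to 10 (very energetic)
    lock_seed: bool    # stands in for a "seed consistency" toggle


def settings_for(scene_type: str, has_character: bool) -> MotionSettings:
    """Pick a starting motion level for a scene type; unknown types get a middle value."""
    level = MOTION_PRESETS.get(scene_type, 5)
    # Keep seed consistency on whenever a character's identity must be preserved.
    return MotionSettings(motion_level=level, lock_seed=has_character)


print(settings_for("portrait", has_character=True))
print(settings_for("action", has_character=False))
```

Treat the returned values as a first guess to adjust after previewing the clip, not as recommended settings.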

Step 4: Control Motion and Refine Output

Once the initial animation is generated, refine it using advanced tools like motion brushing. This allows you to control which parts of the image move and which remain static.

For example, you can animate clouds while keeping buildings still, or create subtle environmental motion without affecting the main subject. This selective control enhances realism and gives your video a professional finish.
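Conceptually, a motion brush paints a mask over the image: marked pixels may move and unmarked pixels stay fixed. The sketch below illustrates that idea in plain Python for the clouds-versus-buildings case. It is only a conceptual model; the function name `sky_motion_mask` and the `horizon` parameter are hypothetical, and the code does not reflect how any particular tool stores or applies its brush strokes.

```python
from typing import List


def sky_motion_mask(width: int, height: int, horizon: float = 0.4) -> List[List[int]]:
    """Return a height x width grid: 1 marks pixels allowed to move, 0 marks static pixels.

    `horizon` is the assumed fraction of the frame, measured from the top, occupied by sky.
    """
    if not 0.0 <= horizon <= 1.0:
        raise ValueError("horizon must be between 0 and 1")
    sky_rows = int(height * horizon)
    # Rows above the horizon (the sky) are movable; everything below stays still.
    return [[1 if y < sky_rows else 0 for _ in range(width)] for y in range(height)]


mask = sky_motion_mask(width=8, height=5, horizon=0.4)
for row in mask:
    print("".join(str(v) for v in row))
```

In a real editor you paint this region by hand, but thinking of it as a mask helps explain why clean subject edges matter: a sloppy boundary lets motion bleed into areas that should stay still.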

Iteration is essential. Adjust prompts, motion settings, and refinements until the final output matches your creative vision.

Part 4. Elevate Your Workflow with HitPaw VikPea Seedance 2.0 Video Generator

For creators looking to streamline this complex process, HitPaw VikPea offers a robust and intuitive environment specifically optimized for the latest AI models. HitPaw VikPea is a comprehensive AI video solution that simplifies the transition from static assets to dynamic cinema. It integrates top-tier models like Seedance 2.0 into a unified interface, allowing both beginners and professionals to produce high-quality content without needing a deep technical background in machine learning.

- AI video generation from text or images for creative video production

- Multiple AI models, including Kling, Veo 3, and Seedance 2.0, for diverse video styles

- Customizable resolution and duration settings for flexible output control

- Built-in enhancement tools improve clarity, sharpness, and visual consistency

- Fast rendering engine delivers quick results for efficient content production

- Simple interface designed for beginners and advanced users alike

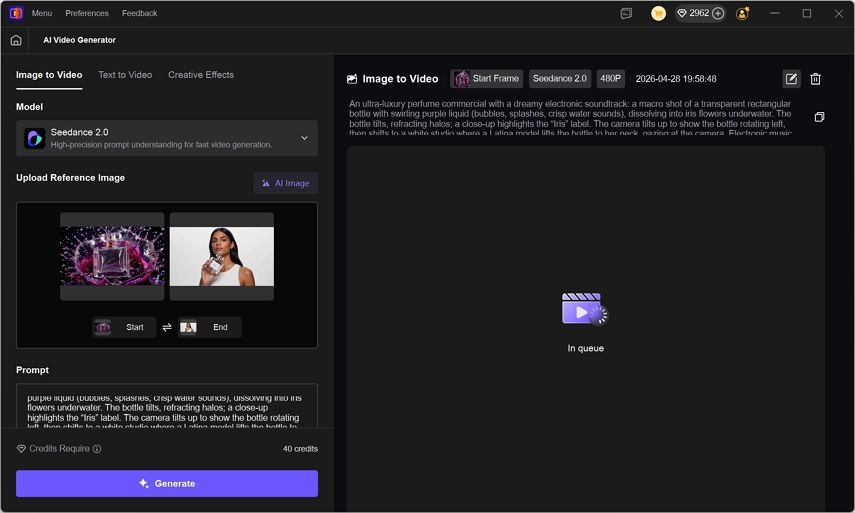

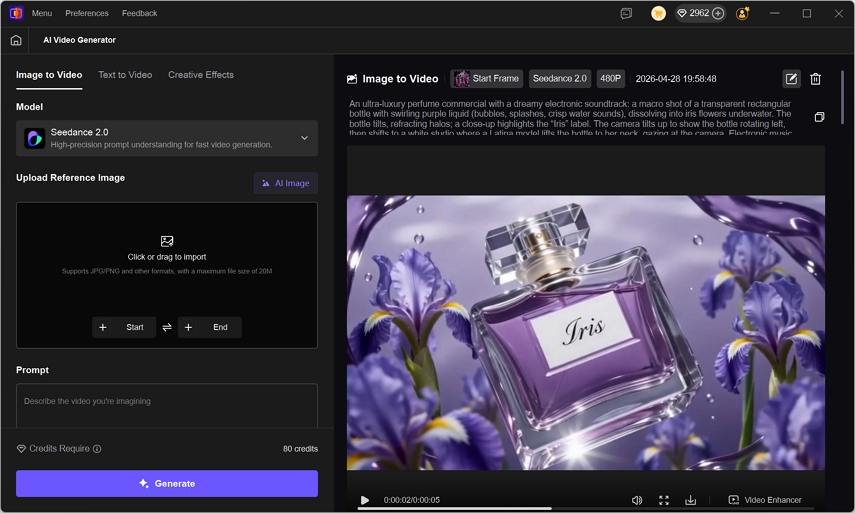

Step 1. Install and launch HitPaw VikPea on your Windows or Mac computer and choose the AI Video Generator from the dashboard.

Step 2. Select the Seedance 2.0 model for your project. Choose the Image-to-Video mode and upload the character image previously created with GPT Image 2.0. Configure your output settings by selecting the desired video duration and resolution.

Step 3. Click the Generate button to initiate the AI rendering process. Once finished, preview your video and download it or use the Video Enhancer tool to boost clarity.

Part 5. Best Practices for GPT Image 2.0 to Seedance 2.0 Workflow

The best practices for a GPT Image 2.0 to Seedance 2.0 workflow involve using high-contrast images and clear focal points to help the AI identify motion areas. Consistently using detailed descriptive keywords for both the image generation and the video motion prompts ensures the highest level of stylistic alignment.

- Use Simple Backgrounds: Avoid overly cluttered backgrounds in GPT Image 2.0 to help Seedance 2.0 focus on animating the primary subject.

- Match Motion to Subject: Set lower motion scales for portraits and higher scales for nature or action scenes to maintain realism.

- Iterative Refinement: If the video drifts, try adjusting the initial GPT image to have more distinct edges and clearer lighting.

- Prompt Synchronization: Keep your video motion prompts short and direct, referencing the specific elements you want to move in the image.

- Resolution Balancing: Generate your GPT images at the same aspect ratio as your intended video to avoid unwanted cropping or stretching.
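The aspect-ratio advice in the last point is simple arithmetic that is easy to check before you render. The following Python sketch reduces pixel dimensions to a ratio and computes how much of an image survives a centered crop to a target ratio. The function names are illustrative, and the dimensions in the example are arbitrary sample values rather than the output sizes of any specific tool.

```python
from math import gcd
from typing import Tuple


def aspect_ratio(width: int, height: int) -> Tuple[int, int]:
    """Reduce pixel dimensions to the smallest whole-number ratio."""
    d = gcd(width, height)
    return (width // d, height // d)


def center_crop_to_ratio(width: int, height: int, target_w: int, target_h: int) -> Tuple[int, int]:
    """Size of the largest centered crop of a width x height image with ratio target_w:target_h."""
    # Compare ratios by cross-multiplication to avoid floating-point error.
    if width * target_h > height * target_w:
        # Image is too wide: keep the full height and trim the sides.
        return (height * target_w // target_h, height)
    # Image is too tall or already matches: keep the full width and trim top and bottom.
    return (width, width * target_h // target_w)


print(aspect_ratio(1920, 1080))                  # (16, 9)
print(center_crop_to_ratio(1024, 1024, 16, 9))   # (1024, 576)
```

In the second call, a square 1024 x 1024 image cropped to 16:9 keeps only 576 of its 1024 rows, so nearly half the frame is lost. Generating the image at the target ratio from the start avoids that loss.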

FAQs on GPT Image 2.0 to Seedance 2.0 Workflow

The main benefit of the GPT Image 2.0 to Seedance 2.0 workflow is efficiency. This workflow allows users to quickly generate visuals and convert them into videos without traditional production steps, saving time and cost while maintaining high-quality results.

Yes, beginners can use these tools with basic guidance. The process involves simple steps like writing prompts and arranging images, making it accessible even without professional video editing experience.

The GPT Image 2.0 to Seedance 2.0 workflow is currently best for high-quality short clips that can be edited together. By generating multiple segments using the same GPT Image 2.0 character assets, you can maintain enough consistency to build a cohesive and professional long-form story.

You can create marketing videos, educational content, storytelling videos, social media clips, and more with the GPT Image 2.0 to Seedance 2.0 workflow. The flexibility of AI tools allows for a wide range of creative applications.

Conclusion

Mastering AI video workflows with GPT Image 2.0 and Seedance 2.0 provides a powerful advantage in today's content-driven world. By combining intelligent image generation with automated video creation, creators can produce engaging videos faster and more efficiently. With the right workflow and tools like HitPaw VikPea, anyone can transform ideas into compelling visual content and stay competitive in the evolving digital landscape.
