Seedance vs Wan 2026: Features, Performance, and Verdict

AI Overview: A comparison of Seedance and Wan 2.1 for AI video generation in 2026, plus how post-processing can bring their raw output up to 4K quality.

The AI video generation landscape is evolving rapidly. As creators search for the best AI video generator of 2026, the debate often comes down to Seedance vs Wan.

Both models push the boundaries of digital creation, offering stunning visuals and dynamic motion. However, raw outputs often suffer from low resolution and digital noise. Let's explore which tool truly fits your workflow today.

Quick Decision: Which One Fits Your Workflow? Seedance or Wan?

When evaluating Seedance vs Wan, the right choice depends heavily on your technical expertise and end goals. Whether you are a professional editor, a fast-paced content creator, or a beginner, aligning the software with your specific user persona is critical for maximizing efficiency.

Choose Seedance If:

- You are a Content Creator or Marketer: You need rapid, stylized content generation for social media platforms without dealing with complex technical setups.

- You prioritize speed over absolute realism: You prefer a highly optimized, commercially viable platform that delivers quick turnaround times.

- You want an accessible interface: You prefer working within a streamlined web UI rather than navigating node-based architectures.

Choose Wan If:

- You are a Professional Editor or AI Enthusiast: You are comfortable pushing the boundaries of open-source technology and don't mind complex backend configurations.

- You demand cinematic physics: You need superior prompt adherence, dynamic camera movements, and highly accurate physical interactions in your footage.

- You have the hardware: You own a high-end GPU (like an RTX 4090) capable of running Wan 2.1 locally, or you are willing to pay for premium cloud computing.

At a Glance: Key Differences & Pricing of Seedance and Wan

| Feature | Seedance | Wan (Wan 2.1) | HitPaw VikPea (Alternative/Enhancer) |
|---|---|---|---|
| Price | Subscription-based (Tiered) | Open-Source (Free, but high compute cost) | Freemium / Subscription |
| Best For | Social Media Creators, Marketers | Pro Editors, AI Researchers | All Users (Generation & Post-Processing) |
| Key Tech | Highly optimized commercial diffusion | Advanced Spatiotemporal Transformers | Multi-Model AI Generation & 4K Upscaling |
| Max Native Output | 1080p (Compressed) | 720p / 1080p (VRAM dependent) | 4K / 8K (via Localized AI Upscaling) |

Overview of Seedance and Wan

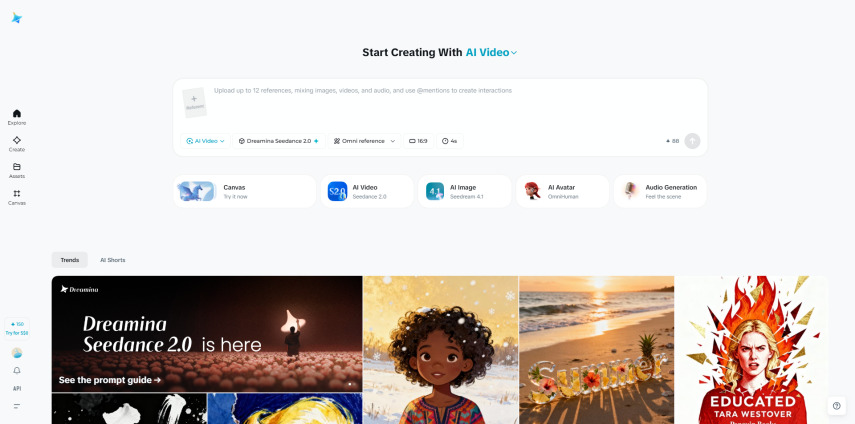

Seedance Overview

Seedance has rapidly established itself as a commercial powerhouse in the AI video space. Often associated with highly optimized proprietary models, it is designed for mass accessibility.

Seedance AI reviews frequently highlight its ability to generate vibrant, stylized clips in record time. It is the go-to tool for marketers who need B-roll, social media shorts, and rapid visual prototyping without worrying about backend coding.

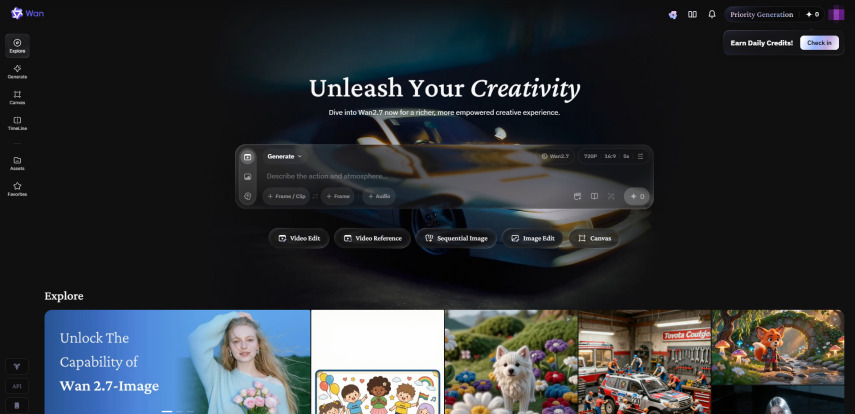

Wan Overview

Wan, specifically the Wan 2.1 model, represents the bleeding edge of open-source video generation. It has garnered massive attention in the AI community for its unparalleled understanding of real-world physics, complex lighting, and strict prompt adherence.

While it requires significant computational power and is often run via ComfyUI or GitHub scripts, technical professionals widely consider it a top contender for the best AI video generator of 2026.

The Post-Processing Reality Overview

Despite the incredible advancements of both Seedance and Wan, a dirty secret remains in the 2026 AI video industry. Neither tool produces production-ready, flawless 4K video natively.

High-resolution rendering is computationally expensive, so raw outputs from both generators are frequently limited to 720p and suffer from digital noise and the uncanny valley effect on human faces. As a result, a third category of tool, the dedicated AI video enhancer, has become mandatory in professional workflows.
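To put the resolution gap in numbers, here is a minimal sketch of the arithmetic. It assumes the standard 16:9 frame sizes and takes "4K" to mean UHD (3840×2160). Pixel count is only a rough proxy for rendering cost, and the function names are ours, for illustration.

```python
# Standard 16:9 frame sizes (width, height). "4K" is assumed to mean UHD.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def pixel_count(name: str) -> int:
    """Number of pixels in a single frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def upscale_factors(source: str, target: str) -> tuple[float, float]:
    """Return (scale factor per side, pixel-count ratio) from source to target."""
    source_width, _ = RESOLUTIONS[source]
    target_width, _ = RESOLUTIONS[target]
    return target_width / source_width, pixel_count(target) / pixel_count(source)


for source in ("720p", "1080p"):
    per_side, pixels = upscale_factors(source, "4K")
    print(f"{source} -> 4K: {per_side:.0f}x per side, {pixels:.0f}x the pixels")
```

A 4K frame holds nine times the pixels of a 720p frame and four times those of a 1080p frame, which helps explain why generators render small and leave the upscaling to a later step.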

Seedance vs Wan: Feature-by-Feature Comparison

To truly understand the Seedance vs Wan debate, we must look beyond surface-level marketing and segment our comparison based on the core dimensions that impact a creator's daily workflow.

Features

- Comparison Content: Wan 2.1 excels in complex prompt adherence. If you prompt a "glass shattering in slow motion while reflecting neon lights," Wan understands the physics of the glass and the lighting dynamics. Seedance, conversely, excels in stylized, artistic interpretations. It handles rapid scene transitions beautifully but occasionally struggles with complex, multi-layered physical interactions.

- Concluding Statement: Wan offers superior physics and prompt accuracy for cinematic needs, while Seedance provides excellent stylized versatility for commercial content.

Quality

- Comparison Content: Both models face the historical bottleneck of rendering costs, typically maxing out at 720p or 1080p. Wan produces highly cinematic lighting but can introduce heavy digital noise in fast-moving scenes. Seedance produces cleaner lines but frequently falls victim to the uncanny valley, where human faces exhibit a smooth, unnatural plastic skin texture.

- Concluding Statement: Both generators offer impressive base quality, but both require post-processing to fix AI-generated video artifacts and achieve true realism.

Speed

- Comparison Content: Seedance is hosted on highly optimized commercial servers, allowing users to generate 5-second clips in under a minute. Wan 2.1, being a massive open-source model, requires intensive VRAM. Running it locally on a 24GB VRAM GPU can take several minutes per clip, and cloud-based generation is subject to queue times and server loads.

- Concluding Statement: Seedance is the undisputed winner for speed and rapid iteration, whereas Wan requires patience for its cinematic outputs.

Pricing

- Comparison Content: Seedance operates on a standard SaaS subscription model, offering predictable monthly costs for a set number of credits. Wan is open-source and technically free. However, the hidden costs lie in the hardware required to run it or the API/cloud GPU rental fees (such as RunPod), which can quickly add up for heavy users.

- Concluding Statement: Seedance offers predictable commercial pricing, while Wan is free but demands significant hardware or cloud computing investments.
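If you want to estimate where the two pricing models cross over for your own volume, a rough break-even calculation helps. The sketch below is illustrative only: the subscription fee, GPU hourly rate, and minutes per clip are hypothetical placeholders, not real Seedance or RunPod prices. It also ignores subscription credit caps, queue time, storage, and failed generations.

```python
def cloud_cost_per_clip(minutes_per_clip: float, gpu_rate_per_hour: float) -> float:
    """Cost of rendering one clip on a GPU rented by the hour."""
    return minutes_per_clip / 60 * gpu_rate_per_hour


def break_even_clips(subscription_per_month: float,
                     minutes_per_clip: float,
                     gpu_rate_per_hour: float) -> float:
    """Clips per month at which GPU rental costs as much as the subscription."""
    return subscription_per_month / cloud_cost_per_clip(minutes_per_clip, gpu_rate_per_hour)


# Hypothetical inputs: replace them with the prices you are actually quoted.
SUBSCRIPTION_PER_MONTH = 30.00  # assumed flat monthly fee, USD
GPU_RATE_PER_HOUR = 0.70        # assumed rental rate, USD per GPU-hour
MINUTES_PER_CLIP = 4.0          # assumed render time ("several minutes per clip")

clips = break_even_clips(SUBSCRIPTION_PER_MONTH, MINUTES_PER_CLIP, GPU_RATE_PER_HOUR)
print(f"Rental matches the subscription at about {clips:.0f} clips per month")
```

Below the break-even volume, renting a GPU for Wan is cheaper under these assumptions. Above it, the flat subscription wins, provided the plan's credits cover that many clips.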

Ease of Use

- Comparison Content: Seedance offers a polished, user-friendly web interface where users simply type a prompt and click "generate." Wan 2.1 often requires users to navigate ComfyUI workflows, install Python dependencies via GitHub, or use third-party host sites that vary in reliability.

- Concluding Statement: Seedance is vastly more accessible for beginners, whereas Wan presents a steep learning curve suited for technical users.

Seedance vs Wan: Performance Tests / Real-World Test

To keep the comparison as objective as possible, we ran scenario-based tests and recorded generation time and output resolution alongside our visual impressions.

- Test Environment: Local machine equipped with an NVIDIA RTX 4090 (24GB VRAM), 64GB RAM, and cloud-based Seedance enterprise access.

- Test Material: Standardized 5-second generation prompts, outputting at native resolutions.

Scenario 1 (Cinematic Landscape)

- Prompt: A sweeping drone shot over a futuristic neon city at midnight, rain falling, reflections on the metallic streets.

- Wan 2.1 Result: The model perfectly captured the physics of the rain and the neon reflections. Generation time was 4 minutes 12 seconds at 720p resolution. The primary issue was heavy digital noise in the darker areas of the frame.

- Seedance Result: The city looked incredibly vibrant and stylized, though the rain physics were slightly less realistic. Generation time was 45 seconds at 1080p compressed resolution. The primary issue was slight motion blur during the fast camera sweep.

Scenario 2 (Portrait / Animation)

- Prompt: Close up of a woman smiling in natural sunlight, wind blowing through her hair, highly detailed.

- Wan 2.1 Result: Excellent hair physics and dynamic lighting were achieved. Generation time was 3 minutes 50 seconds. The issue was that the face exhibited slight structural morphing as she smiled, triggering the uncanny valley effect.

- Seedance Result: Fast generation took only 40 seconds. The lighting was beautiful, but the subject's skin lacked pores and texture, resulting in a distinct plastic appearance common in commercial models.
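The timing gap in the two scenarios can be summarized with a quick calculation. This sketch uses only the generation times reported above, and the helper name is ours. Keep in mind that it is not a like-for-like benchmark: Wan 2.1 ran locally on an RTX 4090, while Seedance ran on its own cloud servers, and the output resolutions differed.

```python
# Generation times reported in the two scenarios above, in seconds.
TIMES = {
    "Cinematic Landscape": {"Wan 2.1": 4 * 60 + 12, "Seedance": 45},
    "Portrait / Animation": {"Wan 2.1": 3 * 60 + 50, "Seedance": 40},
}


def speed_ratio(times: dict[str, int]) -> float:
    """How many times longer Wan 2.1 took than Seedance for one scenario."""
    return times["Wan 2.1"] / times["Seedance"]


for scenario, times in TIMES.items():
    print(f"{scenario}: Wan 2.1 took {speed_ratio(times):.2f}x as long as Seedance")
```

In both scenarios Wan 2.1 took between five and six times as long as Seedance (roughly 5.6x and 5.75x), which matches the speed verdict earlier in this comparison.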

Seedance vs Wan: User Feedback / Community Reviews

To maintain objectivity, we aggregated feedback from Reddit (r/LocalLLaMA, r/AIVideo), Quora, and various AI filmmaking forums to see what real users are saying about Seedance and Wan.

- Seedance Feedback: Users consistently praise the platform's speed and user-friendly interface. Many marketers note that it is the most reliable tool for generating daily social media shorts. The most frequently cited drawback is the lack of granular control over camera movements and the occasional AI look (smooth, plastic skin) on human subjects.

- Wan 2.1 Feedback: The community is highly enthusiastic about Wan's cinematic capabilities. Filmmakers on Reddit frequently highlight its superior adherence to complex, multi-sentence prompts. On the other hand, users complain heavily about the VRAM requirements. Noise is another major pain point: users report that while the motion is great, the raw 720p files are too noisy for professional client work without extensive post-processing.

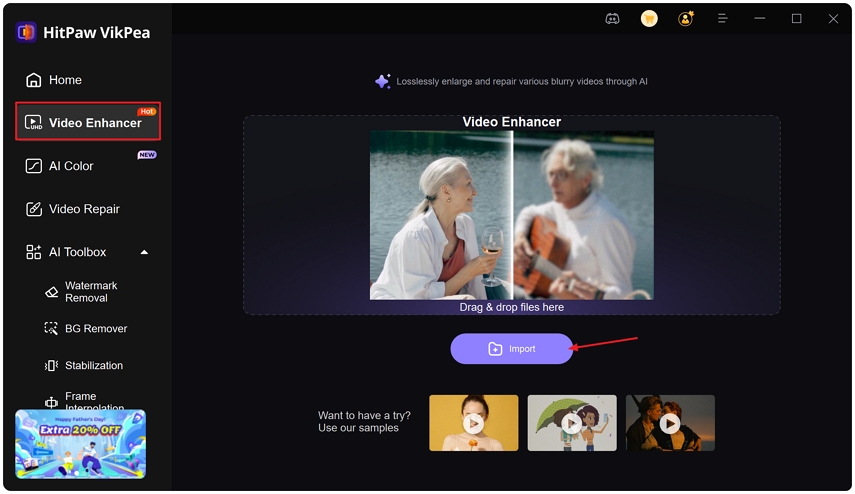

Alternative Tool: HitPaw VikPea - Best Balance for Most Users

While comparing generators is important, the reality of 2026 is that a hybrid workflow is required. If you want the best AI video post-processing and generation experience in one place, HitPaw VikPea offers the ultimate balance of ease of use, functional depth, and value for money.

HitPaw VikPea is a comprehensive platform designed for both AI generation and AI enhancement. Instead of locking you into one model, VikPea integrates multiple top-tier AI generation models natively, including Kling O1, Seedance 2.0, and Pixverse 5.5.

Furthermore, while Seedance and Wan are incredible at generating video, their outputs inherently possess flaws. These common issues include unnatural, plastic-like human figures, low 720p resolutions, or inaccurate colors.

HitPaw VikPea serves as the essential supplementary tool. It acts as an AI video enhancer that takes your raw, flawed AI generations and transforms them into flawless, production-ready masterpieces.

- Ease of Use: A simple, intuitive interface requiring zero coding knowledge.

- Multiple AI Video Models: Generate videos using built-in Seedance, Kling, and Pixverse models.

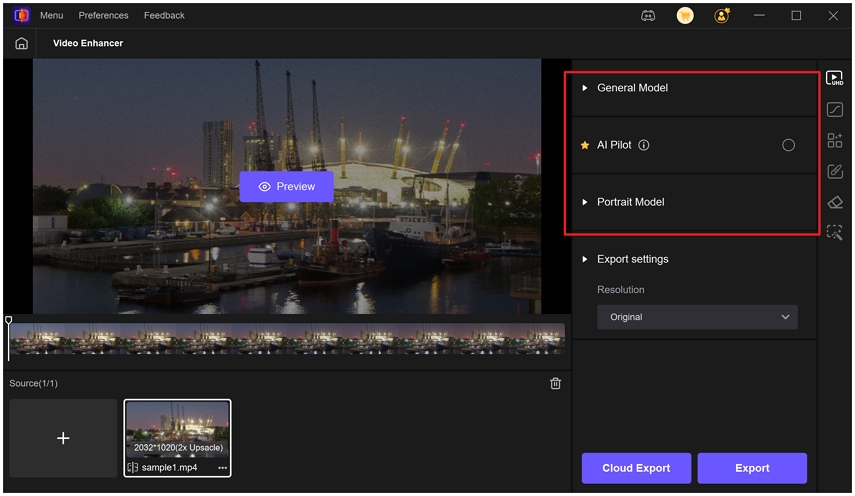

- AI Video Enhancer: Features multiple localized AI models (Portrait, Color Enhancement, Denoise) to fix uncanny valley artifacts in AI video.

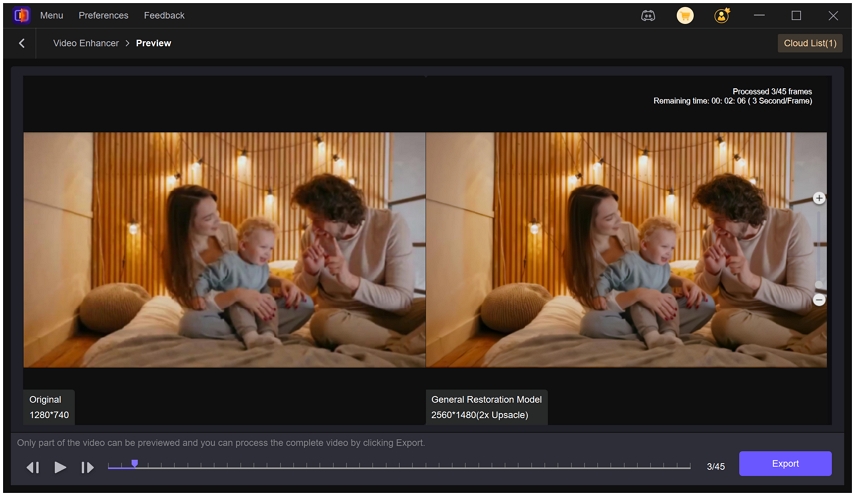

- Split Screen Preview: Instantly validate enhancements before exporting.

Step-by-Step Tutorial to Enhance AI Generative Videos

- Step 1: Download and launch HitPaw VikPea, then select the Video Enhancer feature from the main dashboard.

- Step 2: Import your raw AI-generated video file into the workspace by dragging and dropping it directly.

- Step 3: Choose the appropriate AI model, such as the Portrait Model or General Model, for enhancement.

- Step 4: Use the split-screen preview to compare the original and enhanced video, then click export to save.

FAQs about AI Video

Wan 2.1 excels in cinematic physics and prompt adherence, making it ideal for professionals. However, Seedance offers faster generation times and optimized commercial workflows. The choice depends entirely on whether you prioritize open-source flexibility or rapid, stylized social media content creation.

To upscale AI video to 4K, use a dedicated AI video enhancer like HitPaw VikPea. Simply import your low-resolution generation, apply the General Denoise Model, and let the software upscale the footage to 4K or 8K while removing digital noise.

You can fix uncanny valley AI video artifacts by applying facial restoration software. HitPaw VikPea features a dedicated Face Model that recovers natural skin textures, removes the artificial plastic look, and restores realistic human features in your AI-generated portraits with one click.

The best AI video post-processing software combines upscaling, denoising, and color correction in one place. HitPaw VikPea stands out by offering multiple enhancement models and a split-screen preview, which makes it easy to fix flaws in AI-generated video and raise the quality of your content.

Final Verdict

The battle of Seedance vs Wan in 2026 proves that AI video generation has reached incredible new heights.

- For Beginners and Marketers: Seedance is the clear winner due to its rapid generation speeds, stylized outputs, and user-friendly web interface.

- For Professionals and AI Enthusiasts: Wan 2.1 offers unmatched cinematic physics, prompt adherence, and open-source flexibility.

However, the most crucial takeaway for any creator is understanding the limitations of raw AI generation. Whether you choose the speed of Seedance or the cinematic depth of Wan, you will inevitably encounter the 720p/1080p resolution bottleneck, digital noise, and the dreaded uncanny valley effect.

For a truly balanced workflow, relying on a comprehensive tool like HitPaw VikPea is the smartest choice. Not only does it allow you to generate videos using top-tier models like Seedance and Kling natively, but its powerful AI enhancement suite ensures that you can remove noise from AI video, restore realistic facial textures, and upscale your final product to stunning 4K. By integrating a dedicated enhancer into your workflow, you bridge the gap between raw AI experimentation and professional, client-ready video production.
