DeepSeek V4 Released: Features, Pricing & What's New

DeepSeek V4 has officially been released, marking a major step forward for large language models. It introduces a 1M-token context window, lower-cost inference, and improved long-term memory capabilities, and it is designed to make long-document understanding, full-codebase analysis, and multi-session AI agents far more practical at scale. Early technical analysis highlights several key innovations, including a tiered KV cache architecture (the MODEL1 concept), sparse FP8 mixed-precision decoding, an advanced memory module for persistent recall, and optimized residual connections for faster training and better efficiency.

Part 1. What Is DeepSeek V4?

DeepSeek V4 is the latest generation of DeepSeek's Mixture-of-Experts (MoE) large language models, officially open-sourced on April 24, 2026. It represents a major leap from V3: the Pro version scales to 1.6 trillion total parameters, yet only 49B of them are active for any given token, which keeps inference efficient.
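To make the "total vs. active parameters" distinction concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It assumes a simple softmax gate over the k best-scoring experts; DeepSeek's actual router, expert count, and value of k are not described in this article, so the four toy experts and k=2 below are purely hypothetical. The last helper simply divides the two parameter counts quoted above.

```python
import math
from typing import Callable, List, Sequence, Tuple

# A toy "expert" maps a hidden vector to a hidden vector of the same size.
Expert = Callable[[List[float]], List[float]]


def top_k_route(gate_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their scores."""
    chosen = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    peak = max(gate_logits[i] for i in chosen)  # subtract the max for numerical stability
    exps = [math.exp(gate_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(x: List[float], experts: Sequence[Expert],
                gate_logits: Sequence[float], k: int) -> List[float]:
    """Weighted sum of the outputs of the selected experts only."""
    out = [0.0] * len(x)
    for idx, weight in top_k_route(gate_logits, k):
        y = experts[idx](x)  # unselected experts are never evaluated
        for j, value in enumerate(y):
            out[j] += weight * value
    return out


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of the model's parameters that participate in one token's forward pass."""
    return active_params / total_params


if __name__ == "__main__":
    # Four hypothetical experts that simply scale their input by 1, 2, 3 and 4.
    experts = [lambda v, s=s: [s * t for t in v] for s in (1.0, 2.0, 3.0, 4.0)]
    print(moe_forward([1.0, 2.0], experts, gate_logits=[0.1, 2.0, 0.5, 2.0], k=2))
    print(f"{active_fraction(49e9, 1.6e12):.2%} of parameters active per token")
```

With the figures quoted above, 49B out of 1.6T works out to roughly 3% of the weights doing work for each token, which is why a very large MoE model can still be relatively cheap to run.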

Built for the "Long-Context Era," V4 is the first open-source model to natively support a 1-million-token context window with near-perfect retrieval. This capability is powered by the new HCA (Heavily Compressed Attention) architecture, and the model was trained with the high-efficiency Muon optimizer.

Part 2. DeepSeek V4: What Can the New Architecture Do for You?

DeepSeek V4 isn't just a larger model; it's a more capable one. By decoupling static knowledge from active reasoning through its new HCA and CSA attention architecture, V4 excels in scenarios that were previously too "heavy" for AI.

1. Repository-Level Engineering & Coding

Traditional models struggle when you upload an entire project folder. V4's 1M-token context window and HCA (Heavily Compressed Attention) let it keep a map of your whole project in context. Rather than just suggesting individual lines of code, it can follow cross-file dependencies, making it a true "AI Senior Architect" for complex bug fixing and feature refactoring.
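A common way to use a large context window for repository-level work is to pack the relevant source files into a single prompt, labeling each file with its path so the model can trace cross-file references. The sketch below assumes a rough 4-characters-per-token heuristic (not DeepSeek's actual tokenizer) and a hypothetical set of file extensions; files that would exceed the 1M-token budget are reported rather than silently truncated.

```python
from pathlib import Path
from typing import List, Sequence, Tuple

CONTEXT_BUDGET_TOKENS = 1_000_000  # the 1M-token window described above
CHARS_PER_TOKEN = 4  # rough rule of thumb, NOT DeepSeek's actual tokenizer


def estimate_tokens(text: str) -> int:
    """Crude token estimate: ceil(len(text) / CHARS_PER_TOKEN)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def pack_repository(root: str,
                    extensions: Sequence[str] = (".py", ".ts", ".go", ".md"),
                    budget_tokens: int = CONTEXT_BUDGET_TOKENS) -> Tuple[str, int, List[str]]:
    """Concatenate source files under `root` into one prompt, tagging each with its path.

    Returns (prompt, estimated_tokens_used, skipped_files). Files that would
    push the estimate past the budget are skipped rather than truncated.
    """
    base = Path(root)
    parts: List[str] = []
    skipped: List[str] = []
    used = 0
    files = sorted(p for p in base.rglob("*") if p.is_file() and p.suffix in extensions)
    for path in files:
        rel = path.relative_to(base).as_posix()
        text = path.read_text(encoding="utf-8", errors="replace")
        chunk = f"### File: {rel}\n{text}\n"  # path header keeps cross-file references traceable
        cost = estimate_tokens(chunk)
        if used + cost > budget_tokens:
            skipped.append(rel)
            continue
        parts.append(chunk)
        used += cost
    return "".join(parts), used, skipped
```

Calling `pack_repository("path/to/repo")` returns the packed prompt, an estimated token count, and a list of skipped files; for an accurate count you would swap `estimate_tokens` for the model's real tokenizer.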

2. Persistent AI Agents with "Long-Term Memory"

The integration of CSA (Compressed Sparse Attention) lets V4 work with very long histories efficiently, almost as if its brain had a hard drive. In multi-session chats, the model can retain your specific style guides, previous project decisions, and even nuanced brand preferences without having to fully re-process the entire history every time.
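The article does not describe CSA's internals, so the sketch below uses a generic stand-in: top-k sparse attention, where a query attends only to the k best-matching earlier tokens instead of weighting every token in the history. It is an illustration of the general sparse-attention idea, not DeepSeek's CSA. Note that this toy still scores every key; production designs typically also make the selection step itself cheap, which this sketch does not attempt.

```python
import math
from typing import List, Sequence


def top_k_sparse_attention(query: Sequence[float],
                           keys: Sequence[Sequence[float]],
                           values: Sequence[Sequence[float]],
                           k: int) -> List[float]:
    """Single-query scaled dot-product attention restricted to the k best-matching keys."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * c for q, c in zip(query, key)) for key in keys]
    # Keep only the k highest-scoring positions; all other tokens get zero weight.
    keep = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in keep)  # subtract the max for numerical stability
    weights = [math.exp(scores[i] - peak) for i in keep]
    total = sum(weights)
    out = [0.0] * len(values[0])
    for i, w in zip(keep, weights):
        for j, v in enumerate(values[i]):
            out[j] += (w / total) * v
    return out
```

Setting `k` equal to the number of keys recovers ordinary dense attention, which makes it easy to compare how much the sparse version changes the result for a given history.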

3. Low-Latency Reasoning at Trillion-Scale

Thanks to native FP8 mixed precision, along with the training efficiency of the Muon optimizer, V4 delivers "Pro"-level intelligence at "Flash"-like speeds. In practice, complex reasoning tasks such as legal document analysis or creative brainstorming finish in seconds rather than minutes, significantly lowering the barrier to high-volume enterprise use.
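FP8 mixed precision stores selected tensors as 8-bit floating-point numbers, trading some precision for memory and bandwidth. The sketch below simulates the common E4M3 FP8 format (4 exponent bits, 3 mantissa bits, maximum finite value 448) with simple per-tensor scaling, using ordinary Python floats. It assumes E4M3 and per-tensor scaling purely for illustration; the article does not say which FP8 variant or scaling granularity V4 uses, and real kernels operate on hardware FP8 types with finer-grained scaling.

```python
import math
from typing import List, Sequence, Tuple

E4M3_MAX = 448.0          # largest finite value in the FP8 E4M3 format
E4M3_MIN_NORMAL_EXP = -6  # smallest normal exponent; below this values are subnormal
E4M3_MANTISSA_BITS = 3


def round_to_e4m3(x: float) -> float:
    """Round a Python float to the nearest E4M3-representable value (saturating at +/-448)."""
    if x == 0.0:
        return 0.0
    sign = -1.0 if x < 0 else 1.0
    a = min(abs(x), E4M3_MAX)
    exp = max(math.floor(math.log2(a)), E4M3_MIN_NORMAL_EXP)
    step = 2.0 ** (exp - E4M3_MANTISSA_BITS)  # spacing between neighbouring values in this binade
    return sign * min(round(a / step) * step, E4M3_MAX)


def quantize_fp8(values: Sequence[float]) -> Tuple[List[float], float]:
    """Per-tensor scaling: map the largest magnitude onto E4M3_MAX, then round each value."""
    amax = max((abs(v) for v in values), default=0.0)
    scale = amax / E4M3_MAX if amax > 0 else 1.0
    return [round_to_e4m3(v / scale) for v in values], scale


def dequantize_fp8(codes: Sequence[float], scale: float) -> List[float]:
    """Undo the per-tensor scaling to get approximate original values back."""
    return [c * scale for c in codes]
```

Because E4M3 keeps only 3 mantissa bits, each normal value is recovered to within about 6% relative error, which is why FP8 is usually paired with higher-precision accumulation in "mixed-precision" setups.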

4. Advanced Multimodal Creative Workflows

As a native multimodal model, V4 can "see" and "write" at the same time. For creators using tools like HitPaw VikPea, this means the AI can analyze a 10-minute raw video clip, understand its emotional arc, and then generate well-timed scripts or enhancement prompts to polish the footage.

Part 3. Is DeepSeek V4 Available Now?

Yes. As of April 24, 2026, DeepSeek has released the preview version. Developers can access the API via DeepSeek’s official platform or download the weights from Hugging Face for local deployment.

- DeepSeek-V4-Pro: For high-end reasoning and complex coding.

- DeepSeek-V4-Flash: Optimized for speed and cost ($0.14 per 1M input tokens).

Part 4. DeepSeek V4: Pricing & API Advantages

One of the most disruptive aspects of the DeepSeek V4 release is its pricing strategy. DeepSeek continues to undercut the market, offering "Frontier-level" intelligence at a fraction of the cost of its Western competitors.

1. Industry-Leading Affordability

DeepSeek V4 provides two main API tiers tailored for different scales of production:

- DeepSeek-V4-Flash: Optimized for speed and high-volume tasks. At $0.14 per 1M input tokens, it is approximately 5x-10x cheaper than GPT-4o or Claude 3.5 Sonnet for similar reasoning tasks.

- DeepSeek-V4-Pro: The flagship model. Even with 1.6T parameters, the price stays around $1.74 per 1M tokens, making elite-level reasoning accessible to indie developers and startups.
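To compare the two tiers for a concrete workload, a small calculator is enough. This sketch uses only the prices quoted in this article and treats the Pro figure as an input-token price, which is an assumption since the article does not specify; output-token pricing is not covered, and the 100M-token monthly workload is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceTier:
    name: str
    usd_per_million_input_tokens: float


# Prices as quoted in this article; check DeepSeek's pricing page before relying on them.
V4_FLASH = PriceTier("DeepSeek-V4-Flash", 0.14)
V4_PRO = PriceTier("DeepSeek-V4-Pro", 1.74)  # assumption: treated as an input-token price


def input_cost_usd(tier: PriceTier, input_tokens: int) -> float:
    """Cost of sending `input_tokens` prompt tokens at the tier's per-million rate."""
    return tier.usd_per_million_input_tokens * input_tokens / 1_000_000


if __name__ == "__main__":
    monthly_tokens = 500 * 200_000  # hypothetical workload: 500 requests of 200K tokens each
    for tier in (V4_FLASH, V4_PRO):
        print(f"{tier.name}: ${input_cost_usd(tier, monthly_tokens):,.2f} per month (input only)")
```

For that hypothetical 100M-token workload, the calculation comes to about $14 on Flash versus about $174 on Pro, before any output-token charges.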

2. The "Long-Context" Savings

Thanks to HCA (Heavily Compressed Attention), DeepSeek V4 significantly reduces the "KV Cache" overhead. For users, this means:

- Lower costs for long sessions: Analyzing a 1M-token document costs significantly less on V4 than on other models, because the architecture is optimized for long-context efficiency.

- Faster time to first token: Even with massive inputs, the API starts responding sooner, reducing the wait time for end users.
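Why does the KV cache matter so much at 1M tokens? For standard attention, the cache holds one key and one value vector per layer, per KV head, per token, so its size is 2 × layers × KV heads × head dimension × tokens × bytes per value. The sketch below evaluates that formula for a hypothetical configuration (60 layers, 8 KV heads, head dimension 128, 2-byte values) and applies a hypothetical 10× compression ratio; the article gives neither V4's layer layout nor HCA's actual compression factor.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int) -> int:
    """Uncompressed KV cache size: one key and one value vector per layer, KV head and token."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


def compressed_kv_cache_bytes(uncompressed_bytes: float, compression_ratio: float) -> float:
    """Size after a (hypothetical) compression scheme shrinks the cache by `compression_ratio`."""
    return uncompressed_bytes / compression_ratio


if __name__ == "__main__":
    # Hypothetical model shape; not DeepSeek V4's real configuration.
    full = kv_cache_bytes(num_layers=60, num_kv_heads=8, head_dim=128,
                          seq_len=1_000_000, bytes_per_value=2)
    small = compressed_kv_cache_bytes(full, compression_ratio=10)
    print(f"uncompressed: {full / 1e9:.2f} GB, compressed: {small / 1e9:.2f} GB")
```

Under these assumed numbers a single 1M-token session needs about 246 GB of cache uncompressed versus about 25 GB at 10× compression, which illustrates why cache compression translates directly into cheaper long-context serving.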

3. Seamless API Integration

The DeepSeek V4 API remains OpenAI-compatible. If you already use tools or scripts built for GPT-4, you can switch to DeepSeek V4 by simply changing the base_url, the API key, and the model name.
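Because the API follows the OpenAI chat-completions format, the same request shape works against either provider; only the base URL, key, and model name change. The sketch below uses only the Python standard library. The base URL `https://api.deepseek.com` and the `/chat/completions` path follow DeepSeek's existing API documentation, while the model id `deepseek-v4-flash` is a hypothetical placeholder, so check DeepSeek's docs for the exact V4 model names. With the official `openai` Python package, the equivalent switch is passing `base_url` and `api_key` to `OpenAI(...)`.

```python
import json
import os
import urllib.request
from typing import Dict, List

DEEPSEEK_BASE_URL = "https://api.deepseek.com"
# Hypothetical model id: look up the exact V4 model name in DeepSeek's API docs.
MODEL_NAME = "deepseek-v4-flash"


def build_chat_request(base_url: str, api_key: str, model: str,
                       messages: List[Dict[str, str]]) -> urllib.request.Request:
    """Build an OpenAI-style POST /chat/completions request for any compatible base URL."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    headers = {"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def chat(base_url: str, api_key: str, model: str,
         messages: List[Dict[str, str]], timeout: float = 60.0) -> str:
    """Send the request and return the first choice's message text."""
    request = build_chat_request(base_url, api_key, model, messages)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


if __name__ == "__main__":
    api_key = os.environ.get("DEEPSEEK_API_KEY")
    if api_key:  # only hit the network when a key is configured
        reply = chat(DEEPSEEK_BASE_URL, api_key, MODEL_NAME,
                     [{"role": "user", "content": "Summarize what a KV cache is in one sentence."}])
        print(reply)
```

Pointing `build_chat_request` at a different OpenAI-compatible base URL, key, and model is all it takes to switch providers; the payload itself stays the same.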

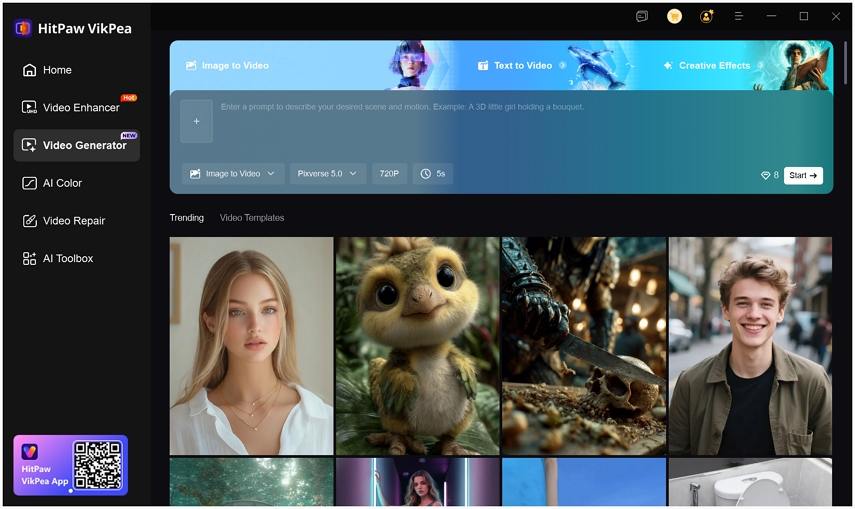

Bonus Tips. Create Stunning AI Videos with HitPaw VikPea AI Video Generator

If you want a hands-on, desktop-friendly way to test modern generative workflows while waiting for model news, HitPaw VikPea offers text-to-video and image-to-video generation plus powerful enhancement tools. VikPea combines multiple creative models, easy preset controls, and frame-level enhancement so creators can build short clips, transform images into motion, or polish footage before publishing. It is a pragmatic pick for creators who need quick, high-quality visual outputs without deep ML expertise.

- AI video generation from text or images for fast creative video production.

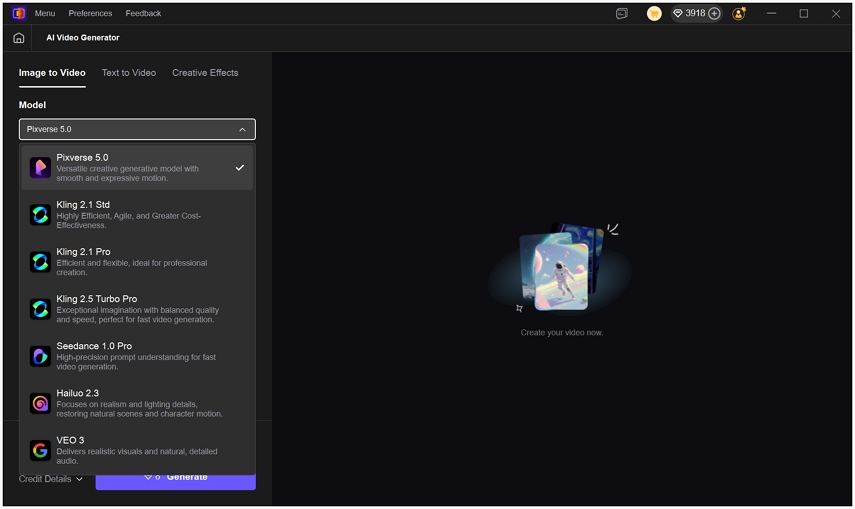

- Multiple AI models optimized for different styles and aesthetic directions.

- Customizable resolution and duration settings to control final video length and size.

- Built-in enhancement pipeline boosts clarity, color, sharpness, and noise reduction.

- User-friendly interface designed for rapid results without technical expertise.

- Batch processing and format support for professional workflows and large files.

Step 1. Install and open VikPea on Windows or Mac, then choose the AI Video Generator tool from the main menu.

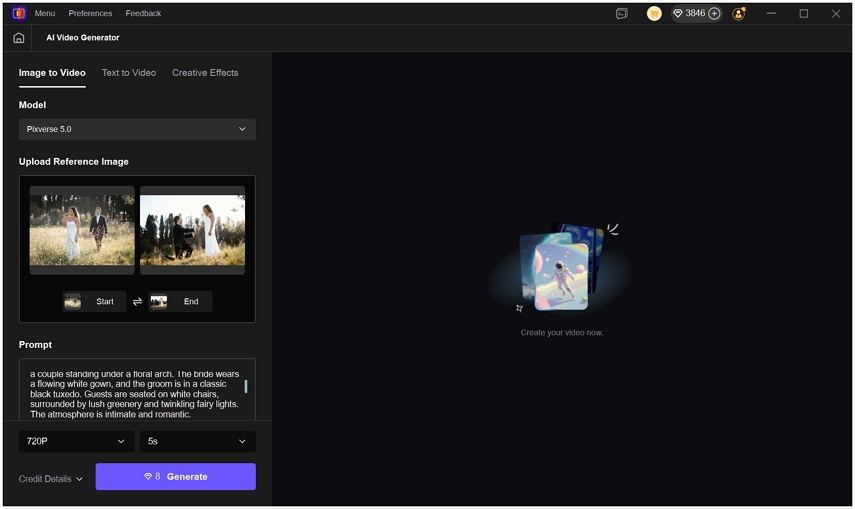

Step 2. Enter a text prompt or upload images: pick Text-to-Video for prompt-driven clips, or Image-to-Video for frame-based motion.

Step 3. Select a model and adjust output settings such as duration, resolution, and style controls.

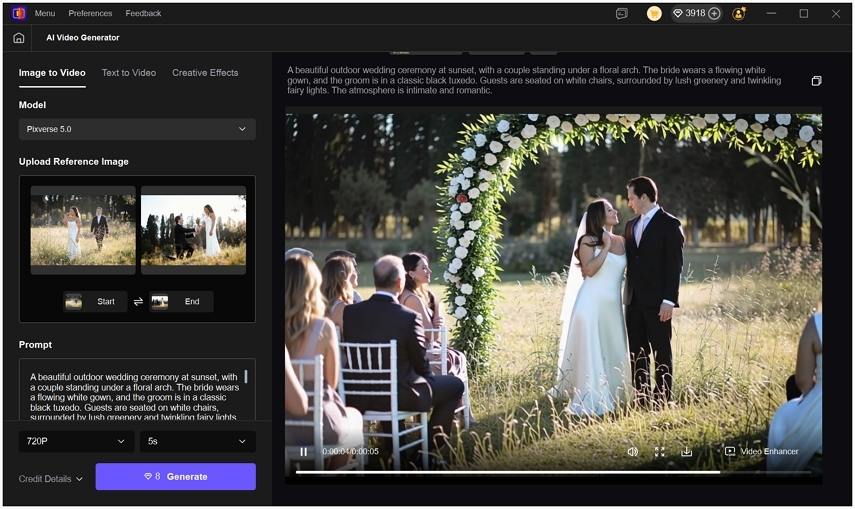

Step 4. Click Generate, preview the result, then save it or run the built-in enhancer for final polish.

Part 5. Frequently Asked Questions on DeepSeek V4

1. How does DeepSeek V4 compare with GPT-5?

Early benchmarks show V4-Pro outperforming GPT-5 on specific coding and mathematics tasks, particularly in long-context scenarios where the HCA architecture provides a significant edge.

2. How much does DeepSeek V4 cost?

DeepSeek-V4-Flash is now the most affordable "frontier" model at $0.14 per 1M input tokens, making it nearly 50% cheaper than V3.

3. Where can I download DeepSeek V4?

You can find the official weights for both the Pro and Flash versions on the DeepSeek Hugging Face repository.

Conclusion

DeepSeek V4 is a pivotal release that prioritizes practical scale. With 1.6T parameters and a 1M context window, it transforms AI from a simple chatbot into a persistent teammate. While others focus on closed ecosystems, DeepSeek’s open-source approach with V4 sets a new standard for the entire AI industry.
